OpenAI has begun rolling out a new "Trusted Contact" feature for ChatGPT, a move that underscores how the relationship between people and AI is changing. It reflects the emotional reliance many users have formed on AI systems, which now extends well beyond productivity tasks into personal decision-making and emotional support. The feature is a safety mechanism: users can designate a person who will be alerted if ChatGPT’s systems detect conversations that suggest a serious risk of self-harm.

The feature’s origins can be traced to observations by OpenAI CEO Sam Altman. Speaking at Sequoia Capital’s AI Ascent event in May of the previous year, Altman described a striking trend: younger users increasingly treat ChatGPT not just as a tool for work or information retrieval but as an "operating system for life," including as a source of advice on major personal decisions. While praising the technology’s capabilities, he also noted that some users now make life choices only after consulting ChatGPT. Coming from the head of the company behind one of the world’s most widely used AI platforms, the remark signals a shift in how the public uses AI: from a computational assistant to something closer to a confidant.

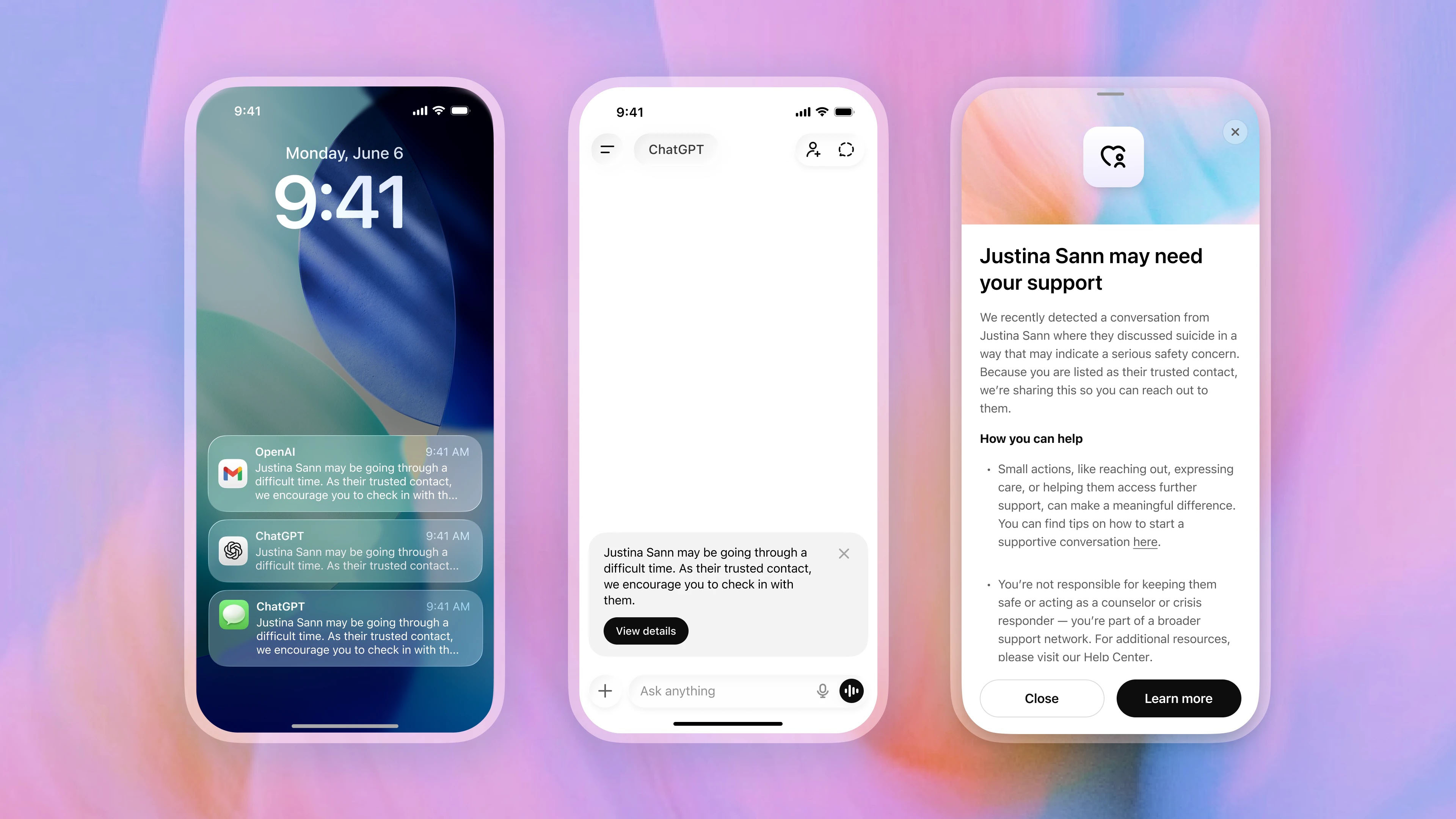

The Trusted Contact feature is being rolled out gradually, so it may not yet be available to every user. Where it is available, users can activate it by selecting their profile name in the ChatGPT interface and opening "Settings." They then nominate a trusted adult, who must formally accept the role before the feature becomes active. This acceptance step ensures the designated person knows about the responsibility and is willing to serve as a point of contact in a crisis.

Once the feature is active, safety checks happen in several stages. If ChatGPT’s automated systems detect patterns or expressions in a user’s conversations that indicate a potential risk of serious self-harm, the user first sees a warning. The warning explains that their Trusted Contact may be notified and encourages them to reach out to that person themselves, prioritizing the user’s own agency and direct human connection. A specialized human review team, trained for these sensitive situations, then assesses the conversation. This human oversight guards against false positives and helps ensure that any intervention is warranted and handled carefully. Only if the reviewers confirm a genuine safety concern is an alert sent to the Trusted Contact, by email, text message, or in-app notification, prompting them to check in on the user.

OpenAI has emphasized privacy in how the feature is implemented. Alerts sent to Trusted Contacts do not include chat transcripts or conversation history, so while the contact is prompted to check in, the content of the user’s exchanges with ChatGPT is not shared with them. Users also keep full control over the feature and can remove or change their Trusted Contact at any time, balancing safety against individual autonomy.

The Ethical Imperative: AI Safety and Mental Health Collaboration

The Trusted Contact feature was not developed in isolation. OpenAI says it consulted a range of experts, including mental-health professionals, suicide-prevention specialists, and a global network of more than 260 doctors across 60 countries. This multidisciplinary input reflects a growing recognition within the AI industry that its products affect human well-being, not just technical performance, and it fits a broader trend toward building ethical considerations and safety protocols into AI development from the outset.

The feature extends OpenAI’s existing safety measures, which include parental controls and built-in safety guardrails. Taken together, these efforts signal a shift in how AI companies see their responsibilities: beyond building powerful tools, they are acknowledging and trying to mitigate the emotional and psychological effects their products can have on users. The timing is notable. While OpenAI has recently emphasized ChatGPT’s productivity features, such as the Codex tool for code generation, introducing Trusted Contact alongside them shows an awareness of both sides of the technology: AI as a powerful workhorse, and AI as a deeply personal presence with real emotional impact.

Reactions and Concerns: A Double-Edged Sword?

The Trusted Contact feature has drawn a range of reactions, from reassurance to apprehension. For many, it is a necessary and commendable step by OpenAI to address the unexpected emotional intimacy users have developed with AI. Supporters see it as a proactive, responsible approach to deployment as the technology grows more capable and more embedded in daily life. It acknowledges the real-world consequences of AI interactions, particularly for vulnerable people who may turn to AI for comfort or advice in a crisis.

However, the feature also raises concerns, particularly about privacy and the psychological effect of being monitored by an AI. The idea of a system continuously analyzing conversations for signs of distress, even with human oversight, can be unsettling. Amy Sutton of Freedom Counselling raised a related concern in an interview about AI monitoring tools in the workplace, warning that such systems could worsen mental-health struggles. Sutton argued that where stigma around mental health persists, knowing that an AI is watching could push people to work harder at hiding their struggles. This "dangerous spiral," as she called it, suggests that monitoring, even with good intentions, could worsen the very problem it aims to address by fostering distrust and secrecy.

Balancing a safety net against user privacy and autonomy is a central ethical challenge for AI developers. Excluding chat transcripts from alerts is a meaningful privacy protection, but having an AI system flag emotional distress at all still raises questions about how conversations are interpreted, possible biases in detection algorithms, and the broader implications for digital surveillance. Users may reasonably ask what criteria the system uses to identify risk, how transparent the human review process is, and how often nuanced human conversations might be misread.

Broader Implications for the Human-AI Relationship

The Trusted Contact feature is more than a new function; it marks how AI’s role in society is changing. It reflects a growing recognition that people treat AI not as a mere collection of algorithms but as an interactive presence with which they form complex, sometimes intimate, relationships. That shift calls for rethinking what "AI responsibility" means and which ethical frameworks are needed as AI systems become woven into everyday human experience.

The feature also implicitly acknowledges the parasocial relationships users can form with AI chatbots. As AI models become more conversational, more accessible, and better at displaying (or convincingly simulating) empathy, users may increasingly attribute human qualities to them and turn to them for support that traditionally came from other people. AI can offer immediate, non-judgmental responses, but it lacks genuine understanding, lived experience, and the capacity for the emotional connection that human relationships provide. By bringing a human into the loop, the Trusted Contact feature tries to bridge this gap so that real-world support is available when AI reaches its limits.

Looking ahead, OpenAI’s move could set a precedent for other AI companies. As AI assistants become more capable and widely adopted, similar safety features may become standard across the industry, requiring further collaboration with mental-health experts and ethicists. This could also lead to closer regulation of AI development, particularly for applications that touch on sensitive areas such as mental health. The ongoing challenge will be to innovate responsibly, pairing technical advances with robust safeguards for user well-being and privacy.

Whether the Trusted Contact feature seems reassuring or unsettling will largely depend on each person’s existing view of AI and its place in their life. Either way, its existence makes one thing clear: platforms like ChatGPT are no longer just advanced tools for information and productivity. People increasingly rely on them emotionally, including in some of their most vulnerable moments. That shift calls for continued, careful discussion of the ethical responsibilities of AI developers and the societal implications of inviting intelligent machines into the most intimate parts of human life. Building AI that is both beneficial and safe is a complex undertaking, and Trusted Contact is one significant step along the way.