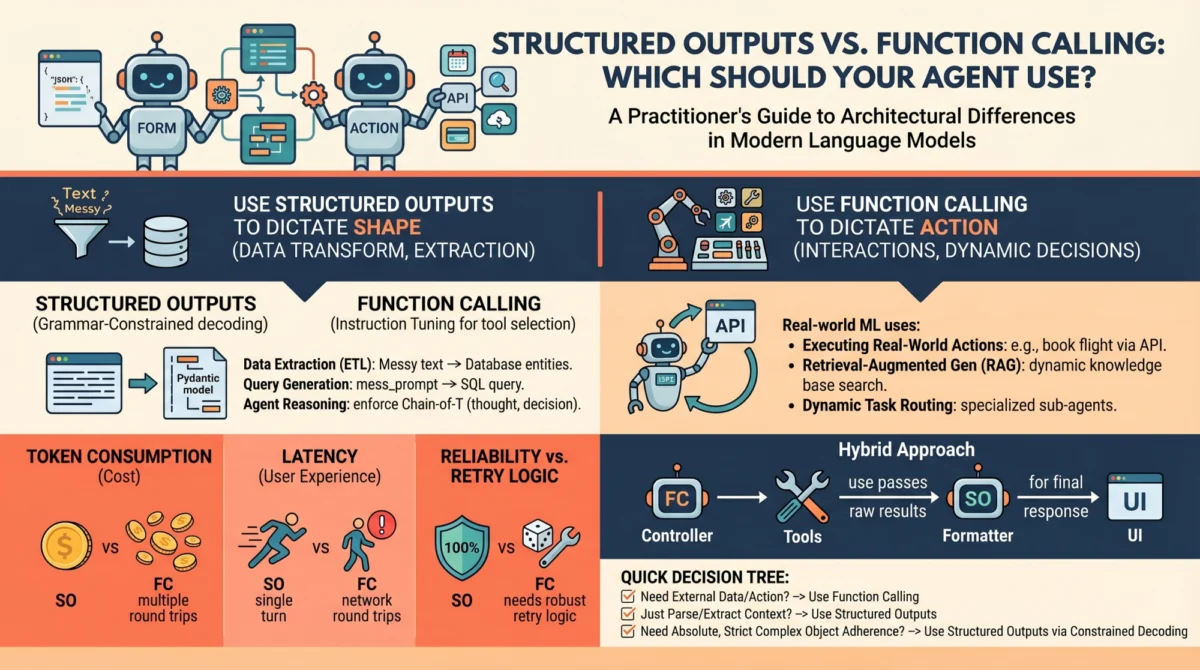

The transition of large language models from conversational novelties to foundational components of enterprise software has necessitated a move away from unstructured text toward schema-constrained, machine-readable interfaces. As developers shift toward building autonomous agents and complex data pipelines, the distinction between two primary architectural patterns, structured outputs and function calling, has become a critical consideration for system reliability and cost-efficiency. While both mechanisms use JSON schemas to constrain model behavior, they serve fundamentally different roles within an application’s logic. Understanding these differences is a prerequisite for building software that can withstand the rigors of production environments.

The Evolution of Model Control: A Chronological Overview

The journey toward reliable model outputs has been marked by three distinct eras of development. In the early stages of the LLM explosion, roughly spanning 2022 to mid-2023, developers relied almost exclusively on prompt engineering. They added instructions such as "return only JSON" or "do not include conversational filler" and hoped the model would comply. Despite these instructions, models frequently emitted extra text or failed to close brackets, leading to fragile parsers and high failure rates in automated systems.

The second era began in June 2023, when OpenAI introduced "Function Calling" for GPT-3.5 and GPT-4. This was a watershed moment that moved the industry toward "tool use." Instead of just generating text, models were fine-tuned to output specific JSON objects that matched a provided function signature. This allowed models to act as "controllers" for external software.

The third and most recent era arrived in late 2023 and throughout 2024 with the introduction of "JSON Mode" and, subsequently, "Structured Outputs." JSON Mode guarantees only that the output is syntactically valid JSON; Structured Outputs goes further and uses grammar-constrained decoding to enforce a developer-supplied schema. Comparable capabilities are now offered by other major providers, including Anthropic (with Tool Use) and Google Gemini, although the enforcement mechanism varies by provider. Unlike previous methods that relied on the model "trying" to follow instructions, constrained decoding prevents the model from generating any token that would violate the predefined schema.

Mechanics of Structured Outputs: Grammar-Constrained Decoding

To appreciate the reliability of structured outputs, one must look at the underlying inference process. Standard language models generate text by predicting the next most likely token from a vast vocabulary. In a typical generation, any token in the vocabulary has a non-zero probability of being selected.

Structured outputs change this through a process known as logit masking or grammar-constrained decoding. When a developer provides a JSON schema (often via a Pydantic model or a JSON schema definition), the system constructs a finite state machine (FSM) or a context-free grammar (CFG) that represents every valid sequence of tokens allowed by that schema. At each step of the generation, the system identifies which tokens are valid according to the grammar. All invalid tokens are masked out: their logits are set to negative infinity, so their probability after the softmax is exactly zero.

For example, if the schema requires a boolean value after the key "is_active":, the decoder cannot emit anything other than "true" or "false." Whenever generation runs to completion, the output is guaranteed to conform to the schema, which removes most of the retry logic and defensive parsing that prompt-only approaches need in the application layer. The application should still handle responses that are cut off by a token limit or declined by the model. This makes structured outputs the ideal choice for data transformation tasks, such as extracting entities from a legal document or converting a customer’s rambling email into a standardized support ticket.
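The masking step can be illustrated with a toy example. This is a minimal sketch, not how any particular provider implements it: the six-entry vocabulary, the `allowed_tokens` lookup, and the state names are all hypothetical, and a real system works on tokenizer IDs with an automaton compiled from the schema.

```python
import math

# Toy vocabulary (assumption); real tokenizers have tens of thousands of entries.
VOCAB = ["true", "false", "null", '"yes"', "42", "}"]


def allowed_tokens(state: str) -> set[str]:
    """Hypothetical grammar lookup: the tokens that are legal in a parser state."""
    grammar = {
        "expect_boolean": {"true", "false"},
        "expect_close": {"}"},
    }
    return grammar[state]


def mask_logits(logits: list[float], state: str) -> list[float]:
    """Replace the logit of every token the grammar forbids with -inf."""
    allowed = allowed_tokens(state)
    return [
        logit if token in allowed else -math.inf
        for token, logit in zip(VOCAB, logits)
    ]


def softmax(logits: list[float]) -> list[float]:
    """Convert logits to probabilities; exp(-inf) evaluates to 0.0."""
    finite_max = max(x for x in logits if x != -math.inf)
    exps = [math.exp(x - finite_max) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Unconstrained, the model would prefer the invalid token '"yes"' (logit 3.0).
raw_logits = [1.0, 0.5, 0.2, 3.0, 0.1, 0.0]
probs = softmax(mask_logits(raw_logits, "expect_boolean"))
best = VOCAB[probs.index(max(probs))]
print(best)  # true
```

Only the two tokens permitted by the grammar keep any probability mass, so sampling can never select the token the unconstrained model preferred.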

Function Calling: The Engine of Agentic Autonomy

While structured outputs focus on the "shape" of the data, function calling is designed to manage the "flow" of an application. Function calling is not merely about formatting; it is an interaction pattern that allows a model to signal that it needs to pause and wait for external information or perform an action in the physical or digital world.

The mechanics of function calling involve a multi-turn dialogue. The developer provides the model with a "toolbox"—a list of available functions with descriptions of what they do and what parameters they require. During inference, if the model determines that it cannot fulfill a user’s request without external help, it generates a "call" instead of a final answer. This call contains the name of the function and the arguments required to run it.
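As a rough illustration, a toolbox entry and a model-generated call might look like the following. The `get_inventory` tool, its parameters, and the `check_call` helper are hypothetical; the overall shape (a name, a description, and a JSON-schema description of the parameters) loosely mirrors what several providers accept, but the exact field names differ between APIs.

```python
import json

# Hypothetical tool definition; names and fields are illustrative only.
TOOLBOX = [
    {
        "name": "get_inventory",
        "description": "Return the number of units in stock for a product.",
        "parameters": {
            "type": "object",
            "properties": {
                "sku": {"type": "string"},
                "warehouse": {"type": "string"},
            },
            "required": ["sku"],
        },
    }
]

# What a model-generated call might look like: a function name plus
# JSON-encoded arguments.
model_call = {"name": "get_inventory", "arguments": '{"sku": "A-100"}'}


def check_call(call: dict, toolbox: list[dict]) -> dict:
    """Look up the named tool and confirm its required arguments are present."""
    tools = {tool["name"]: tool for tool in toolbox}
    if call["name"] not in tools:
        raise ValueError(f"unknown tool: {call['name']}")
    args = json.loads(call["arguments"])
    required = tools[call["name"]]["parameters"]["required"]
    missing = [key for key in required if key not in args]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return args


print(check_call(model_call, TOOLBOX))  # {'sku': 'A-100'}
```

Checking the call before executing it is a cheap safeguard when the provider does not enforce the argument schema during decoding.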

The execution of the function happens outside the model, typically on the developer’s server. Once the function returns a result (such as data from a SQL database or a weather API), that result is fed back into the model as a new message. The model then synthesizes this information to provide a final response to the user. This "reasoning-action-observation" loop is what defines an autonomous agent.
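The loop described above can be sketched without any real model. Everything here is an assumption made for illustration: `fake_model` is a scripted stand-in for an LLM, `get_weather` returns canned data, and the message format is simplified rather than taken from a specific provider's API.

```python
import json
from typing import Callable


def get_weather(city: str) -> dict:
    """Stand-in for a real weather API; returns canned data."""
    return {"city": city, "temp_c": 18}


# Registry that maps tool names to the functions run on the developer's side.
TOOLS: dict[str, Callable[..., dict]] = {"get_weather": get_weather}


def fake_model(messages: list[dict]) -> dict:
    """Scripted stand-in for an LLM: request a tool first, then answer."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {
            "role": "assistant",
            "tool_call": {"name": "get_weather", "arguments": '{"city": "Oslo"}'},
        }
    data = json.loads(tool_results[-1]["content"])
    return {
        "role": "assistant",
        "content": f"It is {data['temp_c']} degrees C in {data['city']}.",
    }


def run_agent(user_prompt: str, max_turns: int = 5) -> str:
    """Alternate between model calls and tool execution until a final answer."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_turns):
        reply = fake_model(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # final answer, no tool requested
        # The function runs outside the model; its result becomes a new message.
        result = TOOLS[call["name"]](**json.loads(call["arguments"]))
        messages.append({"role": "tool", "content": json.dumps(result)})
    raise RuntimeError("agent did not finish within max_turns")


print(run_agent("What is the weather in Oslo?"))  # It is 18 degrees C in Oslo.
```

The `max_turns` cap is a design choice worth keeping in real systems, since a model that never stops requesting tools would otherwise loop indefinitely.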

Comparative Analysis: Latency, Reliability, and Cost

The choice between these two architectures has significant implications for the performance and unit economics of an AI-driven product. Practitioners must weigh the benefits of each based on three primary metrics:

1. Latency and Throughput

Structured outputs are generally faster because they are designed for single-turn interactions. The model takes the input and produces the output in one pass. Function calling, by its nature, is multi-turn. A single user request might involve several "round trips" between the model and the external tools. For real-time applications, such as a voice assistant or a high-speed data processing pipeline, the cumulative latency of these round trips can be prohibitive.

2. Reliability and Error Handling

While structured outputs offer near-perfect schema compliance, they do not guarantee the accuracy of the data itself, only its format. Function calling introduces a different set of reliability challenges. The model must correctly "choose" the right tool and "decide" when it has enough information to stop calling tools. Practitioners commonly report that as the number of available tools increases, the "selection accuracy" of models can degrade, a phenomenon sometimes referred to as "tool-use fatigue."

3. Token Consumption and Cost

Function calling is typically more expensive. Each time the model is called in a multi-turn loop, the entire conversation history, including the tool definitions and previous tool outputs, must be re-sent to the API. The input to each call therefore grows linearly with the number of turns, and the cumulative tokens billed across the loop grow roughly quadratically. Structured outputs, being single-turn, keep the context window lean and the costs predictable.
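A back-of-the-envelope sketch shows how the re-sent history adds up. The token counts are made-up round numbers, and the model ignores output tokens and any prompt caching a provider may offer. With a base context of B tokens and t tokens added per turn, n calls consume n·B + t·n(n−1)/2 input tokens in total.

```python
def cumulative_input_tokens(base: int, per_turn: int, calls: int) -> int:
    """Total input tokens when every call re-sends the full history."""
    total = 0
    history = base
    for _ in range(calls):
        total += history     # the whole history is sent as input
        history += per_turn  # the tool call and its result are appended
    return total


# Illustrative numbers only: 1,000 tokens of prompt and tool definitions,
# 500 tokens added by each tool call and its result.
single = cumulative_input_tokens(base=1_000, per_turn=500, calls=1)
multi = cumulative_input_tokens(base=1_000, per_turn=500, calls=5)
print(single, multi)  # 1000 10000
```

Under these assumptions, five model calls cost ten times the input tokens of one, even though the final context is only three times as long.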

Industry Use Cases and Strategic Implementation

To assist developers in choosing the right path, industry leaders have identified clear boundaries for each technology.

When to Use Structured Outputs:

- Data Extraction: Converting a PDF of a medical record into structured JSON for a database.

- Classification: Labeling 10,000 product reviews as "positive," "negative," or "neutral" with specific sub-categories.

- Syntactic Transformation: Taking a raw transcript and formatting it into a standardized meeting summary with bullet points for "action items" and "attendees."

When to Use Function Calling:

- Dynamic Information Retrieval: A bot that needs to check a live inventory database before answering if a product is in stock.

- Action Execution: An agent that can actually "book" a flight or "send" an email on behalf of the user.

- Reasoning Chains: Complex tasks where the model must perform a step, look at the result, and then decide the next step (e.g., a coding assistant that runs a test, sees an error, and then tries to fix the code).

The Practitioner’s Decision Tree

The consensus among AI architects is to follow a "path of least resistance." If a task can be accomplished with a single-turn structured output, that should be the default choice. Practitioners are encouraged to run through a three-step checklist:

- Is external data required? If the model needs data not present in its training set or the current prompt, function calling is required.

- Is an external action required? If the system needs to change the state of the world (delete a file, charge a credit card), function calling is the mechanism.

- Is the task purely about formatting? If the model is simply reshaping known data, structured outputs are the superior, more efficient choice.
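The checklist can be expressed as a small helper. This is only a sketch of the rule of thumb above; the `Pattern` enum and the `choose_pattern` function are hypothetical names, and real projects may weigh additional factors such as latency budgets.

```python
from enum import Enum


class Pattern(Enum):
    STRUCTURED_OUTPUT = "structured_output"
    FUNCTION_CALLING = "function_calling"


def choose_pattern(needs_external_data: bool, needs_external_action: bool) -> Pattern:
    """Default to structured outputs unless the task must reach outside the model."""
    if needs_external_data or needs_external_action:
        return Pattern.FUNCTION_CALLING
    return Pattern.STRUCTURED_OUTPUT


# Reshaping an email into a support ticket: no external data or action needed.
print(choose_pattern(needs_external_data=False, needs_external_action=False).value)
# Checking live inventory before answering: external data is needed.
print(choose_pattern(needs_external_data=True, needs_external_action=False).value)
```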

Broader Impact and Future Implications

The stabilization of these two architectural patterns marks a shift in the AI industry from "prompting" to "programming." We are seeing the emergence of a new "AI Middleware" layer, built from tools like Pydantic, Instructor, and Outlines, that acts as a bridge between the fuzzy logic of LLMs and the rigid logic of traditional software.

As models become more capable, the boundary between these two methods may continue to blur. OpenAI’s implementation can already apply structured output technology to function calls, in strict mode, so that the generated arguments match the declared signature. However, the conceptual distinction remains vital. Structured outputs represent the "type system" of the AI world, ensuring data integrity. Function calling represents the "API" of the AI world, enabling interaction and agency.

In the long term, the widespread adoption of these techniques is expected to fuel the "Agentic Workflow" movement. According to recent market analysis, the shift toward autonomous agents could automate up to 40% of routine software-based tasks by 2030. This transition relies entirely on the model’s ability to communicate reliably with other software systems. By mastering the distinction between structured outputs and function calling, developers are not just building better chatbots; they are building the infrastructure for the next generation of autonomous computing.