Princeton University researchers have developed a three-dimensional neural network device that integrates living brain cells with embedded electronics, a notable milestone in the convergence of biology and engineering. The 3D bioelectronic computer has been programmed to differentiate patterns. This is a step toward understanding the brain’s inherent computing power and toward easing a critical bottleneck in artificial intelligence. The work, detailed in a paper published in the journal Nature Electronics, opens new avenues both for fundamental neuroscience research and for the development of next-generation, energy-efficient AI systems.

A New Paradigm in Bioelectronic Computing

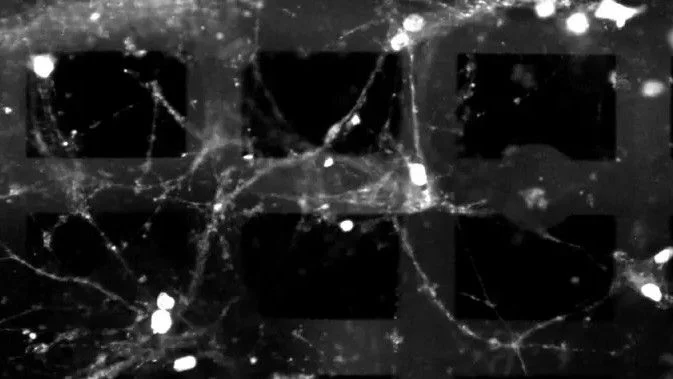

At its core, the Princeton innovation is a new approach to bioelectronic computing in which living brain cells are not merely observed but actively participate in computational tasks within an engineered electronic scaffold. Previous efforts typically grew two-dimensional cell cultures in petri dishes or monitored three-dimensional neural clusters (organoids) from the outside. This device instead embeds the electronics directly within the living tissue. That close integration allows more precise stimulation and real-time monitoring of neural activity from inside the network, in a setting closer to the three-dimensional organization of biological brains.

The immediate implications of this technology are multifaceted. For neuroscience, it provides a new platform for probing the brain’s computational principles in a controlled in vitro environment. "The technology could not only help uncover the computing secrets of the brain but can also assist in understanding and possibly treating neurological diseases," stated Kumar Mritunjay, the paper’s first author and a postdoctoral researcher in electrical and computer engineering. This capability could be valuable for modeling diseases such as Alzheimer’s, Parkinson’s, and epilepsy. It would let researchers observe disease progression at the cellular level and test potential therapeutic interventions in a more realistic setting than flat cultures provide.

Addressing AI’s Looming Energy Crisis

Beyond neuroscience, the research team recognized that their bioelectronic system could help tackle one of the most pressing challenges facing the artificial intelligence industry: power consumption. As AI models grow rapidly in size and complexity, their energy demands have soared, raising concerns about sustainability, operating costs, and environmental impact.

"The real bottleneck for AI in the near future is energy," remarked Tian-Ming Fu, an assistant professor of Electrical and Computer Engineering and a key member of the research team. He highlighted the efficiency gap between biological and artificial intelligence: "Our brain consumes only a tiny fraction, about one millionth, of the power consumed by today’s AI systems to perform similar tasks." That gap underscores the need for more energy-efficient computing paradigms. The Princeton device offers a concrete way to study, and perhaps eventually replicate, the brain’s efficiency.

The Evolution of Bio-Inspired Computing: A Brief Chronology

The journey towards integrating living biological components with electronics has been a gradual yet persistent endeavor, spanning several decades. Early pioneers in the 20th century began experimenting with basic electrical stimulation of neural tissues to understand their responses. However, the true convergence of biology and computation began to gain momentum in the late 20th and early 21st centuries with advancements in microfabrication, neuroscience, and computational modeling.

- 1970s-1980s: Initial forays into culturing neurons on silicon chips, primarily for studying basic neuronal activity and connectivity. These were largely two-dimensional setups.

- 1990s-2000s: Multi-electrode arrays (MEAs), first reported in the 1970s, matured into widely used tools for recording and stimulating neural networks in vitro. The concept of "brain-on-a-chip" began to emerge, aiming to mimic simple brain functions.

- 2010s: Significant progress in creating 3D neural cultures, known as organoids, which provided a more physiologically relevant environment for neurons. Researchers started to probe these organoids for computational capabilities, often still relying on external interfaces. Companies like Cortical Labs began exploring the commercial potential of biological computing, developing systems where human brain cells perform computational tasks, aiming to address the AI energy crisis.

- Present Day (2020s): The Princeton work embeds the electronics directly within the 3D biological matrix. Moving beyond external probing, it couples living cells and artificial components closely enough for them to compute as a single bioelectronic system.

This chronological progression highlights a continuous drive to harness the inherent processing power of biological systems, moving from simple observation to direct integration and functional programming.

The Technical Ingenuity: A Closer Look at the 3D Integration

This article does not describe the Princeton device’s specific design, which is set out in the Nature Electronics paper. The general principles behind such bioelectronic integration, however, involve several key components and methodologies. Building a functional 3D bioelectronic neural network requires expertise across multiple disciplines, including materials science, microfabrication, neurobiology, and electrical engineering.

The "embedded electronics" likely refer to highly specialized micro-electrodes, sensors, and possibly even miniature signal processing units fabricated directly into a biocompatible scaffold. This scaffold, often made from polymers or hydrogels, provides the structural support for the living brain cells to grow and form intricate connections in three dimensions, mimicking the architecture of brain tissue.

- Biocompatibility: The materials used for the electronic components and the scaffold must be entirely non-toxic to the neurons, allowing them to thrive and function naturally for extended periods.

- Microfluidics: Advanced microfluidic channels might be incorporated to deliver nutrients, remove waste products, and maintain a stable physiological environment for the cells.

- Interfacing: A central challenge is creating a stable, high-bandwidth interface between the biological neurons and the electronic circuits. This involves designing nanoscale electrodes that can both record the minute electrical signals (action potentials) generated by neurons and deliver precise electrical stimulation to modulate their activity.

- Computational Techniques: Programming the network to "differentiate patterns" would involve algorithms that translate external inputs into electrical stimuli for the neurons and interpret the resulting neural activity as computational outputs. This could draw on neuromorphic computing, which seeks to emulate brain structures and functions, and on machine learning techniques for training the biological network. A 3D architecture can support a greater density of connections and more complex network topologies than a 2D culture, which may yield richer computational capabilities.
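To make the recording half of the interface concrete, here is a minimal sketch of threshold-based spike detection, a common first step in turning raw electrode voltages into discrete neural events. It is not the Princeton team's pipeline. The helper names (`mad_threshold`, `detect_spikes`, `make_synthetic_trace`), the 20 kHz sampling rate, and the synthetic trace are illustrative assumptions. The threshold sits a multiple `k` of the noise level below the median. The noise level is estimated from the median absolute deviation, so the spikes themselves barely affect it.

```python
import random
import statistics


def mad_threshold(trace, k=5.0):
    """Detection threshold k noise-SDs below the median (hypothetical helper).

    The noise SD is estimated as MAD / 0.6745, which matches the SD for
    Gaussian noise but is far less sensitive to the large spikes themselves.
    """
    med = statistics.median(trace)
    mad = statistics.median(abs(x - med) for x in trace)
    return med - k * (mad / 0.6745)  # extracellular spikes are negative-going


def detect_spikes(trace, fs_hz, k=5.0, refractory_ms=1.0):
    """Return spike times in seconds at downward threshold crossings (hypothetical helper)."""
    thr = mad_threshold(trace, k)
    dead = max(1, int(refractory_ms * 1e-3 * fs_hz))  # samples skipped after a detection
    spikes = []
    i = 1
    while i < len(trace):
        if trace[i] < thr <= trace[i - 1]:
            spikes.append(i / fs_hz)
            i += dead
        else:
            i += 1
    return spikes


def make_synthetic_trace(fs_hz, spike_times_s, noise_uv=5.0, amp_uv=-80.0, seed=0):
    """1 s of Gaussian noise with brief negative deflections standing in for spikes."""
    rng = random.Random(seed)
    trace = [rng.gauss(0.0, noise_uv) for _ in range(fs_hz)]
    for t in spike_times_s:
        start = int(round(t * fs_hz))
        for j in range(6):  # 6-sample trough (0.3 ms at 20 kHz)
            trace[start + j] += amp_uv
    return trace


if __name__ == "__main__":
    fs = 20_000
    trace = make_synthetic_trace(fs, [0.1, 0.4, 0.75])
    print(detect_spikes(trace, fs))
```

Lowering `k` makes detection more sensitive to small spikes but also admits more false detections from noise. The refractory window keeps one spike from being counted several times.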
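The encode, respond, decode loop described in the computational-techniques item can be sketched with a simulated network standing in for the living culture. This is a reservoir-computing-style toy built on stated assumptions, not the Princeton method. A fixed random recurrent network (`ToyReservoir`, hypothetical) plays the role of the neurons. Each input pattern is treated as a set of stimulated electrode sites. Only a linear readout is fitted, and here it is built from class means (nearest-centroid), about the simplest choice available. The two 8-site patterns and all sizes are invented for illustration.

```python
import math
import random


class ToyReservoir:
    """Fixed random recurrent rate network standing in for the living culture.

    Hypothetical stand-in: its internal weights are never trained, mirroring
    the fact that a culture's wiring cannot be set directly like software.
    """

    def __init__(self, n_inputs, n_units=50, gain=0.9, seed=0):
        rng = random.Random(seed)
        scale = gain / math.sqrt(n_units)  # spectral radius roughly `gain`
        self.w = [[rng.gauss(0.0, scale) for _ in range(n_units)] for _ in range(n_units)]
        self.w_in = [[rng.gauss(0.0, 1.0) for _ in range(n_inputs)] for _ in range(n_units)]
        self.n_units = n_units

    def respond(self, stimulus, steps=10):
        """Drive the network with a constant stimulus and return its final state."""
        x = [0.0] * self.n_units
        for _ in range(steps):
            x = [
                math.tanh(
                    sum(a * b for a, b in zip(row, x))
                    + sum(a * u for a, u in zip(row_in, stimulus))
                )
                for row, row_in in zip(self.w, self.w_in)
            ]
        return x


def fit_centroid_readout(states, labels):
    """Fit the only trained part: a linear readout built from class means.

    Choosing the nearer class mean is equivalent to the linear rule
    w.s + b > 0 with w = mu_pos - mu_neg and b = (|mu_neg|^2 - |mu_pos|^2) / 2.
    """
    pos = [s for s, y in zip(states, labels) if y == 1]
    neg = [s for s, y in zip(states, labels) if y == -1]
    mu_pos = [sum(col) / len(pos) for col in zip(*pos)]
    mu_neg = [sum(col) / len(neg) for col in zip(*neg)]
    w = [p - n for p, n in zip(mu_pos, mu_neg)]
    b = (sum(n * n for n in mu_neg) - sum(p * p for p in mu_pos)) / 2.0
    return w, b


def classify(w, b, state):
    """Decode a network state into a class label (+1 or -1)."""
    return 1 if sum(wi * si for wi, si in zip(w, state)) + b > 0 else -1


def noisy_copy(pattern, rng, flips=1):
    """Flip `flips` randomly chosen stimulation sites of a binary pattern."""
    out = list(pattern)
    for i in rng.sample(range(len(out)), flips):
        out[i] = 1 - out[i]
    return out


def run_demo(n_per_class=20, seed=1):
    """Fit on noisy copies of two patterns; return accuracy on fresh noisy copies."""
    pat_a = [1, 1, 1, 1, 0, 0, 0, 0]  # hypothetical stimulation pattern, label +1
    pat_b = [0, 0, 0, 0, 1, 1, 1, 1]  # hypothetical stimulation pattern, label -1
    rng = random.Random(seed)
    net = ToyReservoir(n_inputs=len(pat_a))

    def sample(n):
        pairs = [(noisy_copy(pat_a, rng), 1) for _ in range(n)]
        pairs += [(noisy_copy(pat_b, rng), -1) for _ in range(n)]
        return [net.respond(p) for p, _ in pairs], [y for _, y in pairs]

    train_states, train_labels = sample(n_per_class)
    test_states, test_labels = sample(n_per_class)
    w, b = fit_centroid_readout(train_states, train_labels)
    hits = sum(classify(w, b, s) == y for s, y in zip(test_states, test_labels))
    return hits / len(test_labels)


if __name__ == "__main__":
    print(f"accuracy on fresh noisy copies: {run_demo():.2f}")
```

Fitting only the readout mirrors a practical constraint of biological computing. The stimulation encoding and the decoder can be designed freely, but the network's internal connections cannot simply be written like weights in software.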

Supporting Data: The Scale of AI’s Energy Footprint

Addressing AI’s energy consumption is increasingly urgent. The rapid growth of artificial intelligence, particularly large language models (LLMs) and deep learning, has created unprecedented demand for computational power.

- Training LLMs: One widely cited 2021 estimate puts the training of a single large language model, OpenAI’s GPT-3, at roughly 1,300 MWh of electricity and about 550 tonnes of CO2-equivalent emissions. Subsequent, larger models such as GPT-4 are generally expected to demand more, although their training costs have not been publicly disclosed.

- Data Centers: The global network of data centers, which house the servers for AI computation, is estimated to use roughly 1 to 2% of worldwide electricity. That share is projected to rise substantially with the continued expansion of AI and cloud computing.

- Hardware Demands: Current AI hardware, predominantly graphics processing units (GPUs) and specialized AI accelerators, is highly power-intensive. Although these chips excel at parallel processing, they remain orders of magnitude less energy-efficient than the human brain. The brain runs on approximately 20 watts, roughly the power of a dim light bulb.
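Two figures in this article can be combined into a quick back-of-the-envelope check. The first is a brain running on about 20 watts. The second is Tian-Ming Fu's estimate that the brain uses about one millionth of the power today's AI systems need for similar tasks. The sketch below only multiplies those quoted, approximate numbers. It is not a measurement, and the constant and function names are illustrative.

```python
BRAIN_POWER_W = 20.0        # approximate brain power quoted in the article
POWER_RATIO = 1_000_000     # "about one millionth", per the quoted estimate


def implied_ai_power_w(brain_w=BRAIN_POWER_W, ratio=POWER_RATIO):
    """Power an AI system would draw if it used `ratio` times the brain's power."""
    return brain_w * ratio


def daily_energy_kwh(power_w, hours=24.0):
    """Energy in kilowatt-hours for running at `power_w` watts for `hours` hours."""
    return power_w * hours / 1000.0


if __name__ == "__main__":
    ai_w = implied_ai_power_w()
    print(f"implied AI power: {ai_w / 1e6:.0f} MW")
    print(f"brain, one day:   {daily_energy_kwh(BRAIN_POWER_W):.2f} kWh")
    print(f"AI, one day:      {daily_energy_kwh(ai_w) / 1000:.0f} MWh")
```

Taken at face value, the quoted ratio implies about 20 MW, or roughly 480 MWh per day. The brain, by contrast, uses about 0.48 kWh per day.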

This energy expenditure not only contributes to climate change but also creates a financial barrier to AI development and deployment, especially for smaller research institutions and companies. The Princeton research approaches this challenge from the biological side, by seeking to uncover the mechanisms that let the brain compute so efficiently.

Broader Impact and Future Implications

The Princeton team’s achievement could have implications across several domains:

1. Advancements in Neuroscience and Neurological Disease Treatment:

- Disease Modeling: The integrated 3D bioelectronic device could provide a better platform for creating "disease-in-a-dish" models that more closely reflect the complexity of the human brain. This would allow detailed studies of disease mechanisms, drug screening, and personalized medicine approaches.

- Fundamental Brain Research: With precise control and monitoring of neural circuits, researchers could study how learning occurs, how memories form, and how information is processed at a fundamental level. This could help resolve long-standing questions about brain function.

- Drug Discovery: The platform can accelerate the discovery and testing of new pharmaceutical compounds for neurological disorders, reducing reliance on animal models and potentially shortening drug development timelines.

2. Revolutionizing Artificial Intelligence and Computing:

- Ultra-Low-Power AI: By reverse-engineering the brain’s energy efficiency, the research could inspire a new generation of neuromorphic hardware that consumes significantly less power. This could lead to AI devices that are truly portable, sustainable, and capable of continuous operation.

- Bio-Inspired Algorithms: Insights gained from how biological neural networks compute can inform the development of novel AI algorithms that are more robust, adaptable, and efficient, moving beyond current deep learning paradigms.

- Edge AI: The ability to run complex AI tasks with minimal power would be transformative for "edge AI" applications, where intelligence is deployed directly on devices (e.g., smart sensors, autonomous vehicles, wearables) without constant reliance on cloud connectivity.

3. Enhancing Brain-Computer Interfaces (BCIs) and Prosthetics:

- The deeper understanding of neural signaling and the development of highly integrated bioelectronic interfaces could pave the way for more sophisticated and intuitive brain-computer interfaces, offering new hope for individuals with paralysis or neurological impairments.

- Future prosthetic limbs could be controlled with unprecedented precision and naturalness by directly interfacing with biological neural networks.

4. Ethical Considerations and Societal Dialogue:

- As bioelectronic systems become more complex and capable, discussions around the ethics of creating hybrid biological-digital entities will become increasingly important. Questions regarding consciousness, identity, and the boundaries of life will need to be addressed thoughtfully and proactively.

- The potential for "synthetic sentience" in highly advanced bio-computers, while currently theoretical, warrants early consideration and the establishment of robust ethical guidelines.

Official Responses and the Scientific Community’s Outlook

Publication in Nature Electronics, a selective peer-reviewed journal, means the work has passed expert review. Interdisciplinary results like this one tend to draw strong interest from the scientific community because they push the boundaries of what is possible at the intersection of biology and technology.

Official responses from government agencies or industry leaders are not described in the original press release. Research funding bodies such as the National Institutes of Health (NIH), the National Science Foundation (NSF), and the Defense Advanced Research Projects Agency (DARPA) may well take an interest. These agencies often support research with the potential for significant societal impact and, in DARPA’s case, national security applications. Tech companies such as Google, Microsoft, and Meta are heavily invested in AI and face growing pressure to make their operations more sustainable. They may also follow these advances for potential collaboration or inspiration.

The work at Princeton University offers a compelling vision for the future of computing, one in which the efficiency of biological intelligence is combined with the precision and scalability of advanced electronics. It reflects an effort to understand the most complex machine known, the brain, and to use its secrets to build a more intelligent and sustainable future.

Challenges Ahead

Despite this progress, significant challenges remain on the path from laboratory proof-of-concept to widespread application.

- Longevity and Stability: Maintaining the viability and functionality of living neurons in vitro for extended periods, especially when integrated with electronics, is a complex biological engineering challenge.

- Scalability: Scaling these intricate 3D bioelectronic networks toward the complexity of the human brain, which contains roughly 86 billion neurons, is a daunting task. Reaching even a small fraction of that scale would be a major leap.

- Programming Complexity: Developing sophisticated algorithms to effectively "program" and interpret the highly dynamic and non-linear behavior of biological neural networks is an ongoing area of research.

- Standardization: Establishing standardized protocols for culturing, integrating, and testing these bioelectronic systems will be crucial for reproducibility and broader adoption in research and development.
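Scale is also hard on the electronics side. The sketch below computes the raw, uncompressed data rate a recording interface would generate. It assumes, hypothetically, 30 kHz sampling and 16-bit samples per channel, values in the range commonly used for extracellular recording. The output shows how quickly the numbers grow with channel count. The function names and channel counts are illustrative and are not taken from the Princeton device.

```python
def raw_data_rate_bps(channels, sample_rate_hz=30_000, bits_per_sample=16):
    """Uncompressed bits per second for `channels` electrodes (hypothetical defaults)."""
    return channels * sample_rate_hz * bits_per_sample


def human_readable(bps):
    """Format a bit rate with decimal SI prefixes."""
    for unit, scale in (("Tbit/s", 10**12), ("Gbit/s", 10**9), ("Mbit/s", 10**6)):
        if bps >= scale:
            return f"{bps / scale:.1f} {unit}"
    return f"{bps} bit/s"


if __name__ == "__main__":
    for n in (1_000, 1_000_000, 1_000_000_000):
        print(f"{n:>13,} channels -> {human_readable(raw_data_rate_bps(n))}")
```

Under these assumptions, 1,000 channels produce about 480 Mbit/s, and a billion channels would produce about 480 Tbit/s. This growth is one reason practical systems compress data or detect spikes on the device rather than streaming raw voltages.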

Nevertheless, the Princeton team’s work lays a foundation for future exploration in the young but rapidly evolving field of bioelectronic computing. As researchers continue to unravel the intricacies of the brain and refine the interfaces between living tissue and electronics, the promise of ultra-efficient, brain-inspired computing draws closer.