The landscape of European computational science reached a significant milestone with the full operational integration of MareNostrum V, a supercomputer housed at the Barcelona Supercomputing Center (BSC-CNS). Located on the campus of the Polytechnic University of Catalonia, the facility presents a striking juxtaposition of architectural eras: while the 19th-century Torre Girona chapel serves as a museum for the original 2004 MareNostrum racks, the new system occupies a dedicated, purpose-built facility adjacent to the historic site. Representing a joint investment of approximately €202 million, MareNostrum V ranks among the fifteen most powerful supercomputers in the world and serves as a cornerstone of the EuroHPC Joint Undertaking's mission to provide Europe with a world-leading high-performance computing (HPC) ecosystem.

A New Era of Computational Power: The Transition from Legacy to MareNostrum V

The evolution of the MareNostrum series reflects the exponential growth of computing requirements in the 21st century. Since the inauguration of the first MareNostrum in 2005, which was then the most powerful computer in Europe, the BSC has consistently pushed the boundaries of processing capability. The transition to MareNostrum V marks a shift from traditional monolithic computing to a highly specialized, heterogeneous architecture designed to meet the divergent needs of modern data science, artificial intelligence, and traditional scientific simulation.

Unlike the cloud environments familiar to many data scientists, such as Amazon Web Services (AWS) or Google Cloud, high-performance computing at supercomputer scale operates under fundamentally different architectural rules. While commercial cloud platforms excel at "elastic" scaling for web services and distributed data processing, MareNostrum V is engineered for massive, tightly coupled simulations in which the speed of communication between thousands of processors is as critical as the speed of the processors themselves.

Architectural Framework: The Fat-Tree Topology and Networking

At the heart of MareNostrum V's efficiency is its networking infrastructure. In distributed computing, the network is often the primary bottleneck. To mitigate this, MareNostrum V uses an InfiniBand NDR200 fabric arranged in a "fat-tree" topology, which is designed to prevent congestion by increasing link bandwidth toward the top of the network hierarchy.

In a typical office or data center network, many nodes share a single uplink, so congestion builds when they all try to communicate at once. In a fat-tree topology, the "branches" near the "trunk" of the network are physically and logically wider than those near the "leaves"; in a full (non-tapered) fat tree, the bandwidth available at each level matches the traffic that can arrive from below, so the network is non-blocking. This lets any of the system's thousands of nodes reach any other node through a small, bounded number of switch hops at low latency, a requirement for large-scale neural network training and complex fluid dynamics simulations.
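The non-blocking property can be expressed as an oversubscription ratio: at a given switch, the bandwidth facing the hosts divided by the bandwidth going up toward the core. A ratio of 1.0 means no tapering. The sketch below computes that ratio and the host capacity of the textbook three-level k-ary fat tree (k³/4 hosts for k-port switches). It is a generic model; the port counts and link speeds in the example are hypothetical and are not MareNostrum V's actual switch configuration.

```python
"""Back-of-the-envelope fat-tree arithmetic (generic textbook model, not MN5's layout)."""


def oversubscription_ratio(down_ports: int, down_gbps: float,
                           up_ports: int, up_gbps: float) -> float:
    """Ratio of host-facing bandwidth to uplink bandwidth at one switch.

    1.0 means the switch is non-blocking; 3.0 means up to three times more
    traffic can arrive from below than the uplinks can carry upward.
    """
    if up_ports <= 0 or up_gbps <= 0:
        raise ValueError("a switch needs at least one uplink with positive bandwidth")
    return (down_ports * down_gbps) / (up_ports * up_gbps)


def kary_fat_tree_hosts(k: int) -> int:
    """Hosts supported by a three-level fat tree built from k-port switches.

    Each of the k pods has k/2 edge switches, each serving k/2 hosts,
    giving k * (k/2) * (k/2) = k**3 / 4 hosts in total.
    """
    if k < 2 or k % 2:
        raise ValueError("k must be an even number of ports >= 2")
    return k * (k // 2) * (k // 2)


if __name__ == "__main__":
    # Hypothetical leaf switch: half its ports face nodes, half face the spine.
    print(oversubscription_ratio(32, 200, 32, 200))  # non-blocking
    # Hypothetical tapered design: 48 node-facing ports, only 16 uplinks.
    print(oversubscription_ratio(48, 200, 16, 200))  # 3:1 oversubscribed
    print(kary_fat_tree_hosts(4))
    print(kary_fat_tree_hosts(64))
```

A tapered tree (ratio above 1.0) is cheaper to build, which is why the "non-blocking" label is worth checking against the actual ratio of a given installation.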

Technical Specifications: The General Purpose and Accelerated Partitions

The computational power of MareNostrum V is divided into two primary partitions, each tailored for specific types of research:

1. The General Purpose Partition (GPP):

Designed for highly parallel, CPU-intensive tasks, the GPP contains 6,408 nodes, each equipped with 112 Intel Sapphire Rapids cores, for a combined peak performance of 45.9 petaflops (PFlops). This partition is the workhorse for traditional scientific computing, including climate modeling, genomic analysis, and complex engineering simulations that demand large amounts of CPU time.

2. The Accelerated Partition (ACC):

Focusing on the fast-growing fields of artificial intelligence and molecular dynamics, the ACC partition comprises 1,120 nodes, each outfitted with four NVIDIA H100 SXM GPUs. Because each H100 GPU represents a significant capital investment, the GPU cluster alone accounts for over €110 million of the machine's total cost. This partition reaches a peak performance of 260 PFlops, making it an essential tool for training large language models (LLMs) and for high-fidelity simulations that benefit from hardware acceleration.
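The partition figures above can be cross-checked with simple arithmetic. The sketch below derives aggregate core and GPU counts and the average peak share per node and per core or GPU, using only the totals quoted in the text. The per-device results are averages of each partition's quoted peak, not vendor specifications for a single device.

```python
"""Derived quantities from the partition figures quoted in the text."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Partition:
    name: str
    nodes: int
    units_per_node: int   # CPU cores (GPP) or GPUs (ACC) per node
    peak_pflops: float    # quoted peak performance of the whole partition

    @property
    def total_units(self) -> int:
        return self.nodes * self.units_per_node

    def peak_per_node_tflops(self) -> float:
        # 1 PFlops = 1,000 TFlops
        return self.peak_pflops * 1e3 / self.nodes

    def peak_per_unit_tflops(self) -> float:
        return self.peak_pflops * 1e3 / self.total_units


gpp = Partition("GPP", nodes=6_408, units_per_node=112, peak_pflops=45.9)
acc = Partition("ACC", nodes=1_120, units_per_node=4, peak_pflops=260.0)

if __name__ == "__main__":
    for p in (gpp, acc):
        print(f"{p.name}: {p.total_units:,} units, "
              f"{p.peak_per_node_tflops():.2f} TFlops/node, "
              f"{p.peak_per_unit_tflops():.4f} TFlops/unit")
```

This yields 717,696 CPU cores in the GPP and 4,480 GPUs in the ACC partition, or roughly 58 TFlops of quoted peak per GPU on average.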

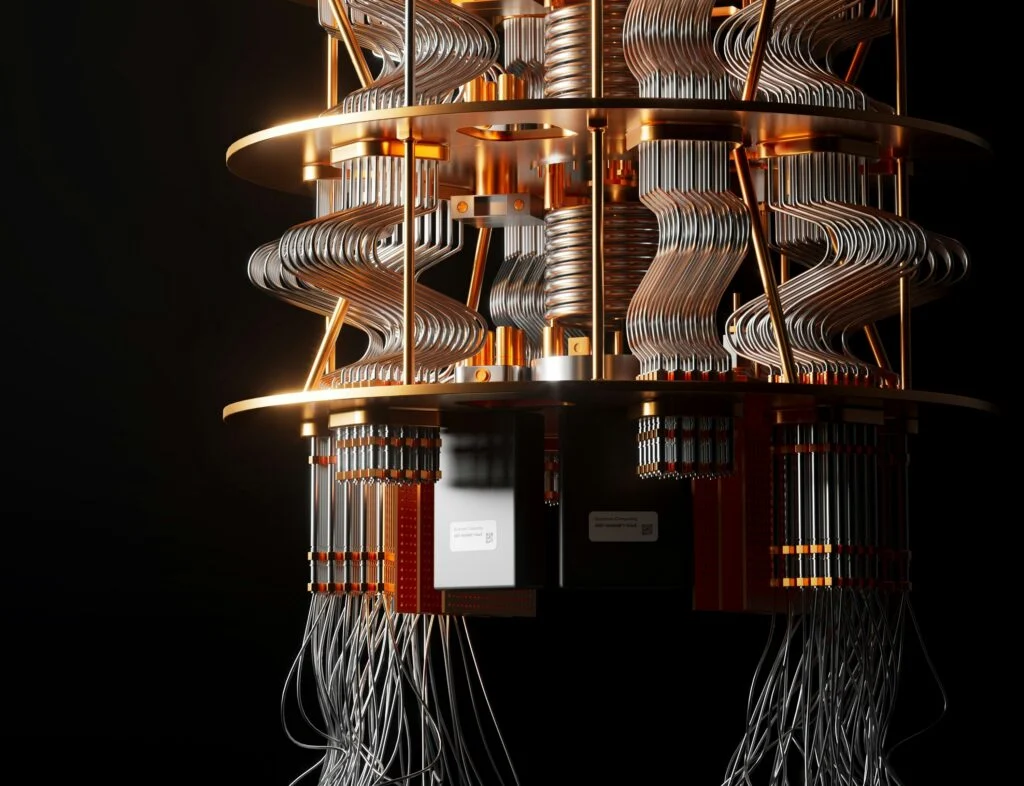

The Integration of Quantum Computing: MareNostrum-Ona

Beyond classical silicon, MareNostrum V is a pioneer of the hybrid computing era. The facility has logically and physically integrated Spain's first quantum computers: a digital, gate-based quantum system and the recently acquired MareNostrum-Ona, a state-of-the-art quantum annealer based on superconducting qubits.

Rather than viewing quantum units as replacements for classical CPUs, the BSC utilizes these Quantum Processing Units (QPUs) as specialized accelerators. When the supercomputer encounters optimization problems or quantum chemistry simulations that are computationally "expensive" for even the H100 GPUs, it can offload these specific kernels to the quantum hardware. This hybrid approach positions MareNostrum V at the forefront of the next generation of scientific discovery.
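Optimization problems sent to a quantum annealer are commonly expressed as a QUBO (quadratic unconstrained binary optimization): find a binary vector x that minimizes xᵀQx. The sketch below solves a tiny, hypothetical QUBO by exhaustive search on a CPU to make that objective concrete. It illustrates the problem format only and is not BSC's offloading interface. Brute force checks 2ⁿ assignments, so it becomes infeasible beyond a few dozen variables, which is one motivation for trying specialized hardware.

```python
"""Brute-force reference solver for a small QUBO (illustrative only)."""
from itertools import product
from typing import Sequence, Tuple


def qubo_energy(x: Sequence[int], Q: Sequence[Sequence[float]]) -> float:
    """Compute x^T Q x for a binary vector x."""
    n = len(x)
    return sum(Q[i][j] * x[i] * x[j] for i in range(n) for j in range(n))


def solve_qubo_brute_force(Q: Sequence[Sequence[float]]) -> Tuple[Tuple[int, ...], float]:
    """Return (best_x, best_energy) by checking all 2**n binary assignments."""
    n = len(Q)
    best_x, best_e = None, float("inf")
    for bits in product((0, 1), repeat=n):
        e = qubo_energy(bits, Q)
        if e < best_e:
            best_x, best_e = bits, e
    return best_x, best_e


if __name__ == "__main__":
    # Hypothetical 3-variable problem: diagonal terms reward selecting a variable,
    # positive off-diagonal terms penalize selecting neighbouring pairs together.
    Q = [
        [-1.0, 2.0, 0.0],
        [0.0, -1.5, 2.0],
        [0.0, 0.0, -1.0],
    ]
    print(solve_qubo_brute_force(Q))
```

For this matrix the minimum is x = (1, 0, 1) with energy -2.0: selecting both outer variables avoids every pairwise penalty.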

Operational Reality: Security, Airgaps, and the SLURM Environment

Interacting with a €200 million machine requires adherence to strict operational protocols that differ significantly from standard server management. One of the most notable features of the MareNostrum V environment is the "airgap" security model. While researchers access the system via Secure Shell (SSH) through designated login nodes, the compute nodes themselves have no outbound internet access.

This airgapped environment presents a unique challenge for researchers accustomed to real-time library updates. Users cannot utilize commands like pip install or wget directly on the compute nodes. Instead, all software, datasets, and dependencies must be pre-downloaded, compiled, and staged within the user’s storage directory before a job is submitted. To assist with this, the BSC administrators maintain a comprehensive "module" system that provides pre-optimized versions of common scientific libraries.
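One common way to stage Python dependencies for an offline environment is to build a local "wheelhouse" on a machine with internet access (for example with `pip download -r requirements.txt -d wheelhouse/`), copy it to the cluster, and install from it with `pip install --no-index --find-links wheelhouse/ ...`. Before submitting a job, it can help to confirm that every required package actually has a wheel in the staged directory. The sketch below is a hypothetical pre-flight check: it inspects `.whl` filenames only, does not check versions or platform tags, and assumes the directory layout just described.

```python
"""Hypothetical pre-flight check: are all required packages staged as wheels?"""
import re
from pathlib import Path
from typing import Iterable, List, Set


def normalize(name: str) -> str:
    """PEP 503 normalization: runs of '-', '_' and '.' become '-', lowercased."""
    return re.sub(r"[-_.]+", "-", name).lower()


def staged_packages(filenames: Iterable[str]) -> Set[str]:
    """Normalized distribution names found among wheel filenames.

    Spec-compliant wheel names look like '{distribution}-{version}-...-{platform}.whl'
    with any '-' in the distribution escaped as '_', so the first field is the name.
    """
    return {normalize(f.split("-", 1)[0]) for f in filenames if f.endswith(".whl")}


def missing_packages(required: Iterable[str], filenames: Iterable[str]) -> List[str]:
    """Required packages with no matching wheel (versions are not checked)."""
    available = staged_packages(filenames)
    return sorted(r for r in required if normalize(r) not in available)


def check_wheelhouse(required: Iterable[str], wheelhouse: Path) -> List[str]:
    """Convenience wrapper that reads filenames from a staged directory."""
    return missing_packages(required, (p.name for p in wheelhouse.iterdir()))
```

For example, `check_wheelhouse(["numpy", "scikit-learn"], Path("wheelhouse"))` would return the names that still need to be downloaded before the job can run offline.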

The management of these resources is handled by the Simple Linux Utility for Resource Management (SLURM). SLURM acts as a workload manager, queuing jobs according to priority, resource availability, and the user's allocated "CPU-hour" budget. Researchers submit bash scripts, known as "job scripts", that specify the number of nodes and tasks and the "wall-time" required. When a job reaches its requested wall-time, the scheduler terminates it whether or not the simulation has finished, so that the machine remains available for the next scheduled researcher.
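A job script is an ordinary shell script whose header lines beginning with `#SBATCH` carry the resource request. The sketch below uses Python to render such a script and to format a wall-time in formats that `sbatch --time` accepts (`HH:MM:SS`, or `D-HH:MM:SS` for runs longer than a day). The account name, module name, and executable are hypothetical placeholders; the partitions, QoS names, and modules actually available on MareNostrum V are set by BSC and are not assumed here.

```python
"""Render a minimal Slurm batch script (hypothetical account/module/program names)."""


def format_walltime(seconds: int) -> str:
    """Format seconds as Slurm wall-time: 'HH:MM:SS', or 'D-HH:MM:SS' past one day."""
    if seconds <= 0:
        raise ValueError("wall-time must be positive")
    days, rem = divmod(seconds, 86_400)
    hours, rem = divmod(rem, 3_600)
    minutes, secs = divmod(rem, 60)
    hms = f"{hours:02d}:{minutes:02d}:{secs:02d}"
    return f"{days}-{hms}" if days else hms


def make_job_script(job_name: str, nodes: int, tasks_per_node: int,
                    walltime_s: int, account: str, module: str, command: str) -> str:
    """Return the text of a batch script to be submitted with `sbatch`."""
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --account={account}",                   # project charged for CPU-hours
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --ntasks-per-node={tasks_per_node}",
        f"#SBATCH --time={format_walltime(walltime_s)}",  # job is terminated when this expires
        f"#SBATCH --output={job_name}_%j.out",            # %j expands to the job ID
        "",
        f"module load {module}",                          # pre-installed software, no internet needed
        f"srun {command}",                                # launches nodes * tasks_per_node tasks
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(make_job_script("cfd_case01", nodes=4, tasks_per_node=112,
                          walltime_s=2 * 3_600, account="my_project",
                          module="openfoam", command="./solver"))
```

The rendered text would be saved to a file and submitted with `sbatch job.sh`; asking for a modest safety margin on `--time` is a common way to avoid losing a nearly finished run.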

Scientific Case Study: CFD Simulations and Amdahl’s Law

The practical application of MareNostrum V is best illustrated by complex simulations such as Computational Fluid Dynamics (CFD). In recent research on aerodynamic surrogate models, the supercomputer was used to run 50 high-fidelity CFD simulations across varied 3D meshes. Using the OpenFOAM framework, the researchers automated the data pipeline by chaining SLURM jobs together and processing multiple aerodynamic evaluations simultaneously.
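Slurm expresses such pipelines through job dependencies: `sbatch --parsable` prints the new job's ID, and `--dependency=afterok:<id>` holds a job until the named job completes successfully. The sketch below submits a hypothetical three-stage chain (meshing, solving, post-processing) for each case, so the stages of one case run in order while independent cases can run concurrently when resources allow. The stage script names are placeholders, not the scripts used in the research described above, and the submit function is injectable so the chaining logic can be exercised without a cluster.

```python
"""Submit per-case Slurm job chains (hypothetical stage scripts)."""
import subprocess
from typing import Callable, Dict, List, Optional, Sequence

STAGES = ("mesh.sh", "solve.sh", "post.sh")  # hypothetical per-stage job scripts

SubmitFn = Callable[[str, Sequence[str], Optional[str]], str]


def sbatch(script: str, args: Sequence[str], after: Optional[str]) -> str:
    """Submit one job via the `sbatch` CLI and return its job ID."""
    cmd = ["sbatch", "--parsable"]
    if after is not None:
        cmd.append(f"--dependency=afterok:{after}")
    cmd += [script, *args]  # arguments after the script name are passed to it
    out = subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
    return out.strip().split(";")[0]  # --parsable prints "jobid" or "jobid;cluster"


def submit_chains(cases: List[str], submit: SubmitFn = sbatch) -> Dict[str, List[str]]:
    """Chain STAGES for every case; different cases do not depend on each other."""
    chains: Dict[str, List[str]] = {}
    for case in cases:
        previous: Optional[str] = None
        ids: List[str] = []
        for stage in STAGES:
            previous = submit(stage, [case], previous)  # each stage waits on the last
            ids.append(previous)
        chains[case] = ids
    return chains
```

On a cluster, `submit_chains([f"case_{i:02d}" for i in range(50)])` would queue 150 jobs for 50 cases, leaving the scheduler free to run different cases side by side.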

However, the utilization of such a machine is governed by Amdahl’s Law, which defines the theoretical limits of parallelization. Amdahl’s Law states that the speedup of a program is limited by the portion of the code that must remain serial. For example, if 5% of a program is fundamentally sequential, the maximum speedup achievable is 20x, regardless of how many thousands of cores are applied to the task. Furthermore, increasing the number of cores beyond a certain point can lead to diminishing returns due to the "communication overhead"—the time processors spend talking to each other rather than performing calculations.
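For a parallelizable fraction p running on N cores, Amdahl's Law gives S(N) = 1 / ((1 − p) + p/N), which approaches 1/(1 − p) as N grows; with p = 0.95 that limit is 20. The sketch below evaluates the formula and then adds a purely illustrative communication cost proportional to log₂N. The overhead term and its coefficient are assumptions chosen for demonstration, not measurements from MareNostrum V; they show how any cost that grows with core count produces a finite optimum.

```python
"""Amdahl's Law, plus a toy communication-overhead model (illustrative only)."""
import math


def amdahl_speedup(p: float, n: int) -> float:
    """Ideal speedup on n processors when a fraction p of the work parallelizes."""
    if not 0.0 <= p <= 1.0 or n < 1:
        raise ValueError("need 0 <= p <= 1 and n >= 1")
    return 1.0 / ((1.0 - p) + p / n)


def runtime_with_overhead(p: float, n: int, c: float) -> float:
    """Normalized runtime (serial run = 1) plus an assumed c * log2(n) communication cost."""
    return (1.0 - p) + p / n + c * math.log2(n)


def best_core_count(p: float, c: float, max_exp: int = 20) -> int:
    """Power-of-two core count that minimizes the toy runtime model."""
    candidates = [2 ** k for k in range(max_exp + 1)]
    return min(candidates, key=lambda n: runtime_with_overhead(p, n, c))


if __name__ == "__main__":
    p = 0.95  # 5% of the program is serial
    for n in (1, 112, 10_000, 1_000_000):
        print(f"{n:>9} cores: speedup {amdahl_speedup(p, n):6.2f}")
    print("toy optimum:", best_core_count(p, c=1e-4), "cores")
```

Even at a million cores the ideal speedup stays just under 20, and under the assumed overhead the runtime stops improving at 8,192 cores, after which adding cores makes the job slower.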

Funding and Strategic Importance

The development of MareNostrum V is a collaborative effort under the EuroHPC Joint Undertaking, with significant contributions from a consortium of nations including Spain, Portugal, and Turkey. This investment is part of a broader European strategy to achieve "digital sovereignty" by reducing dependence on non-European computing infrastructure and ensuring that European researchers have access to the world’s most advanced tools.

Mateo Valero, Director of the Barcelona Supercomputing Center, has frequently emphasized that such machines are essential for addressing "the great challenges of humanity," from predicting the effects of climate change to developing new personalized medical treatments. The inauguration of the system was met with praise from European Commission officials, who noted that MareNostrum V would be instrumental in the "AI for Science" movement, enabling European startups and researchers to train massive models locally.

Conclusion: A Public Resource for the Future of Science

Despite its immense cost and technical complexity, MareNostrum V remains a publicly funded scientific resource. Access is provided free of charge to researchers through competitive calls for proposals managed by the Spanish Supercomputing Network (RES) and the EuroHPC Joint Undertaking.

The transition from the 19th-century chapel to the quantum-integrated halls of MareNostrum V serves as a powerful metaphor for the progression of human knowledge. Behind the unremarkable blinking cursor of an SSH terminal lies a distributed network capable of performing hundreds of quadrillions of calculations per second. As Europe moves deeper into the era of exascale computing, MareNostrum V stands not just as a machine but as critical infrastructure for the next century of scientific and technological advancement.