The global expansion of Large Language Models (LLMs) has transitioned from a phase of novel experimentation to one of foundational infrastructure, yet a significant gap remains between high-level user interaction and the low-level architectural decisions that govern model efficiency. While the majority of the public interacts with polished Application Programming Interfaces (APIs)—inputting prompts and receiving instantaneous responses—the underlying structural choices regarding speed, cost, and capability are often obscured. As the industry moves toward more specialized, fine-tuned, and efficient models, understanding the nuances of LLM architecture has become a prerequisite for engineers and researchers looking to optimize these systems for production environments. Recent technical audits of GPT-2-style architectures have highlighted several non-obvious design choices, from rank stabilization in fine-tuning to the strategic exclusion of certain layers during quantization, that determine whether a model succeeds or fails at scale.

The Evolution of Fine-Tuning: From LoRA to Rank Stabilization

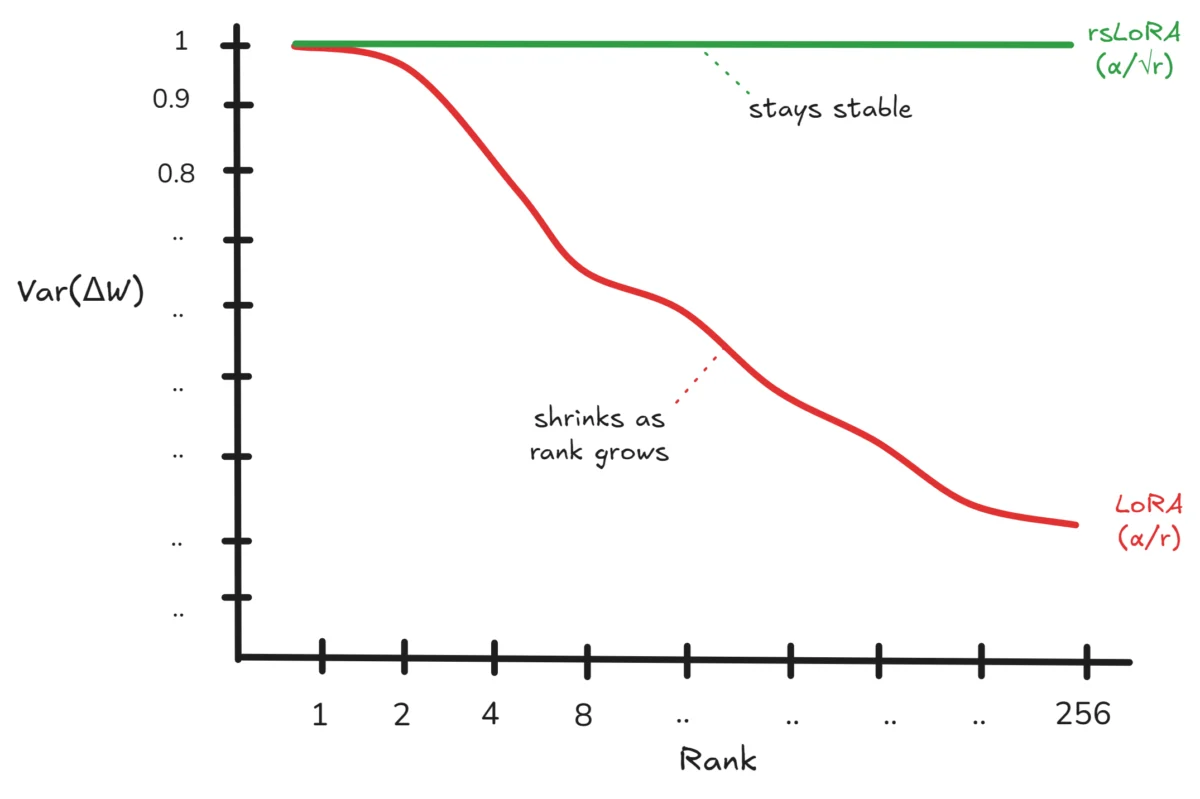

Low-Rank Adaptation, or LoRA, has become the industry standard for fine-tuning massive models due to its ability to reduce trainable parameters by over 99%. By freezing the original weights of a model and training only two smaller, low-rank matrices (B and A), developers can adapt a model to specific tasks with minimal computational overhead. However, as practitioners have attempted to scale the "rank" (r) of these adapters to capture more complex information, a significant performance bottleneck has emerged. Technical analysis reveals that in standard LoRA implementations, the scaling factor—defined as the ratio of alpha to rank—can inadvertently diminish the importance of weight updates as the rank increases.
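The "over 99%" figure follows from simple counting. The sketch below assumes a single square projection of size 4096 × 4096 and an adapter rank of 8; both numbers are illustrative choices, not values taken from any particular model.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_out + d_in)

d_out = d_in = 4096   # hypothetical projection size
rank = 8              # hypothetical adapter rank

full = d_out * d_in                              # weights in the frozen matrix
lora = lora_trainable_params(d_out, d_in, rank)  # weights that are actually trained
reduction = 1 - lora / full

print(f"full: {full:,}  lora: {lora:,}  reduction: {reduction:.2%}")
# prints: full: 16,777,216  lora: 65,536  reduction: 99.61%
```

Raising the rank increases the trainable count linearly, so even rank 64 on this layer stays near a 97% reduction.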

Statistical evaluations conducted by researchers such as Kalajdzievski have demonstrated that when the entries of matrices B and A are treated as independent draws from normal distributions, the variance of each entry of their product BA increases proportionally with the rank r, so its typical magnitude grows as √r. Consequently, when the update is divided by the rank, as in the standard LoRA formula ΔW = (α/r)·BA, the typical magnitude of the total weight change (ΔW) falls as 1/√r. This results in shrinking weight updates that render the fine-tuning process less effective as the rank grows. To counteract this, practitioners are increasingly adopting Rank-Stabilized LoRA (RsLoRA), which replaces the rank divisor with its square root, giving ΔW = (α/√r)·BA. This adjustment keeps the variance constant, allowing the magnitude of weight updates to remain stable regardless of the rank. The change matters for enterprises fine-tuning models on highly specialized datasets, where a higher rank is necessary to capture subtle patterns without losing the influence of the learned parameters.
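The variance argument can be checked numerically. The sketch below is a simplified simulation, not the procedure from the rsLoRA paper: it assumes every entry of B and A is an independent N(0, 1) draw, as in the statistical argument above (a real LoRA adapter typically starts with B set to zero), and it samples a single entry of BA rather than building full matrices. The function names are hypothetical.

```python
import math
import random
import statistics

def delta_w_entry(rank: int, rng: random.Random) -> float:
    """One entry of B @ A: a sum of `rank` products of independent N(0, 1) draws."""
    return sum(rng.gauss(0.0, 1.0) * rng.gauss(0.0, 1.0) for _ in range(rank))

def update_std(rank: int, scale: float, samples: int = 2000, seed: int = 0) -> float:
    """Empirical standard deviation of scale * (B @ A)[i][j]."""
    rng = random.Random(seed)
    draws = [scale * delta_w_entry(rank, rng) for _ in range(samples)]
    return statistics.pstdev(draws)

alpha = 1.0
results = {}
for rank in (4, 16, 64):
    lora = update_std(rank, alpha / rank)               # standard LoRA scaling
    rslora = update_std(rank, alpha / math.sqrt(rank))  # rank-stabilized scaling
    results[rank] = (lora, rslora)
    print(f"r={rank:3d}  alpha/r: {lora:.3f}  alpha/sqrt(r): {rslora:.3f}")
```

Under these assumptions the first column should shrink roughly as 1/√r while the second stays close to alpha at every rank.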

Positional Embeddings: The Transition to Rotary Dynamics

The methodology by which a model understands the order of words, positional embedding, has undergone a radical transformation since the debut of the Transformer architecture in 2017. The original "Attention Is All You Need" paper utilized Sinusoidal Positional Embeddings, a fixed-formula approach that required no trainable parameters. While efficient, this method provided only absolute positioning and was directly added to token embeddings, potentially distorting the original semantic information. Subsequent models, including GPT-2 and GPT-3, moved toward Learned Positional Embeddings, where the network identifies positional relationships through backpropagation. While this improved flexibility, it added a trainable table with one vector per position (1,024 × 768 entries in the smallest GPT-2), tied the model to a fixed maximum context length, and continued the problematic practice of direct addition to token embeddings.
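As a concrete illustration of the fixed-formula approach and of "direct addition," here is a minimal sketch of the sinusoidal encoding from the 2017 paper. The 4-dimensional token vector is a made-up example, and the function name is an assumption for illustration.

```python
import math

def sinusoidal_embedding(position: int, d_model: int) -> list[float]:
    """Fixed encoding: sine on even indices, cosine on odd indices."""
    vec = []
    for i in range(0, d_model, 2):
        # i is the even index, so i / d_model equals 2k / d_model for pair k.
        angle = position / (10000 ** (i / d_model))
        vec.append(math.sin(angle))
        vec.append(math.cos(angle))
    return vec[:d_model]

token_embedding = [0.5, -0.2, 0.1, 0.9]   # hypothetical token vector
pos_embedding = sinusoidal_embedding(position=3, d_model=4)

# The positional signal is summed into the same coordinates that carry meaning.
model_input = [t + p for t, p in zip(token_embedding, pos_embedding)]
print(model_input)
```

A learned positional embedding replaces `sinusoidal_embedding` with a lookup into a trained table, but the final addition step is the same.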

The emergence of Rotary Positional Embeddings (RoPE) has resolved these historical conflicts and has been adopted by modern flagship models like Llama and Mistral. RoPE functions by rotating the query and key vectors by angles determined by their sequence position and a set of fixed frequencies, rather than adding values to the embeddings. This "zero-parameter" approach leaves the original token embeddings untouched, and because rotations compose, the attention score between two tokens depends only on the distance between them, giving the model relative position information directly. Furthermore, RoPE has proven to be instrumental in extending context windows: since position enters only through rotation angles, those angles can be rescaled to adapt a trained model to sequence lengths longer than those seen during initial training, usually with some additional fine-tuning. This shift marks a move toward "spatial awareness" in LLMs that is both more mathematically elegant and computationally efficient.
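The relative-position property can be demonstrated in a few lines. The sketch below is a minimal pure-Python version of the rotation, assuming the common convention of rotating consecutive coordinate pairs with a base of 10000; production implementations differ in how they pair dimensions and operate on whole tensors. The vectors `q` and `k` are arbitrary examples.

```python
import math

def rope(vec: list[float], position: int, base: float = 10000.0) -> list[float]:
    """Rotate each consecutive pair (x0, x1), (x2, x3), ... by position * theta."""
    d = len(vec)
    out = []
    for i in range(0, d, 2):
        theta = base ** (-i / d)          # lower frequency for later pairs
        angle = position * theta
        c, s = math.cos(angle), math.sin(angle)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out

def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))

q = [0.3, -1.2, 0.7, 0.5]   # example query vector
k = [1.1, 0.4, -0.6, 0.9]   # example key vector

score_a = dot(rope(q, 7), rope(k, 3))       # positions 7 and 3, offset 4
score_b = dot(rope(q, 107), rope(k, 103))   # positions 107 and 103, offset 4
print(score_a, score_b)
```

The two scores should agree to within floating-point error, because shifting both positions by the same amount leaves the relative rotation unchanged. The rotation also preserves vector length, so no token information is scaled away.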

The Scaling Paradox and the Obsolescence of Weight Tying

In the early stages of LLM development, "Weight Tying" was a celebrated optimization technique. By sharing weights between the token embedding layer and the output projection head, models like GPT-2 and BERT could save a significant percentage of their total parameters. In a 124-million parameter model, weight tying saves approximately 38 million parameters (a 50,257-token vocabulary times a 768-dimensional embedding), equivalent to roughly 30% of the model's size, which was vital when hardware constraints were more severe. The logic was intuitive: if the embedding layer maps tokens to vectors and the output head maps vectors back to tokens, the two can plausibly share a single matrix, with the output head using its transpose.

However, as models have scaled into the billions of parameters, the utility of weight tying has plummeted. In a 70-billion parameter model, those same 38 million parameters would represent roughly 0.05% of the total architecture. Models at that scale use wider embeddings, so the shared matrix is larger (a 32,000-token vocabulary at a hidden size of 8,192 gives about 262 million parameters), but that is still under 0.4% of the model, rendering the optimization practically negligible. Furthermore, keeping these weights separate allows the output head to specialize independently, which is argued to improve performance in complex linguistic tasks. Consequently, modern architectures like Falcon and Mistral have largely abandoned weight tying, prioritizing representational power over minor parameter savings. This evolution reflects a broader trend in AI: as compute and memory availability increase, optimizations that compromise the "freedom" of individual layers are being phased out.
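The arithmetic behind the scaling argument is easy to reproduce. The sketch below uses GPT-2's published vocabulary and embedding sizes; the 32,000 × 8,192 configuration is an illustrative assumption for a model in the 70-billion range, not a claim about a specific release.

```python
def embedding_params(vocab_size: int, d_model: int) -> int:
    """Size of one token-embedding (or output-head) matrix."""
    return vocab_size * d_model

# GPT-2 small: 50,257 tokens, 768-dimensional embeddings.
gpt2_saved = embedding_params(50257, 768)
print(f"saved by tying: {gpt2_saved:,}")
print(f"relative to a 124M model: {gpt2_saved / 124e6:.1%}")
print(f"relative to a 70B model:  {gpt2_saved / 70e9:.3%}")

# Hypothetical large configuration with a wider hidden size.
large_saved = embedding_params(32000, 8192)
print(f"large config saved: {large_saved:,} ({large_saved / 70e9:.2%} of 70B)")
```

The saving grows with the hidden size, but the rest of the model grows much faster, which is why the percentage collapses.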

Stability vs. Performance: The LayerNorm Tug-of-War

The placement of Layer Normalization (LayerNorm) within the Transformer block is a primary factor in training stability. The original Transformer used "Post-LN," where normalization occurred after the residual addition. While this can lead to higher peak performance, it is notoriously difficult to train because it often leads to vanishing or exploding gradients in deep networks, requiring meticulously tuned learning rate schedules.

Starting with GPT-2, the industry pivoted toward "Pre-LN," where normalization occurs inside the residual block, before the attention and feed-forward layers. This choice prioritizes training stability, allowing for the construction of much deeper models without the risk of numerical instability. While some researchers argue that Pre-LN slightly limits the model’s ultimate representational capacity, the trade-off is almost universally accepted in production environments. Recent innovations like RMSNorm (Root Mean Square Normalization) have further refined this process by removing the mean-centering operation, providing a faster and more memory-efficient alternative that maintains the stability benefits of the Pre-LN approach.
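The difference between the two placements, and between LayerNorm and RMSNorm, comes down to a few lines. The following is a toy sketch on plain Python lists: learnable gain and bias terms are omitted, and `toy_sublayer` is a hypothetical stand-in for attention or the feed-forward network.

```python
import math

def layer_norm(x: list[float], eps: float = 1e-5) -> list[float]:
    """Subtract the mean, then divide by the standard deviation."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def rms_norm(x: list[float], eps: float = 1e-5) -> list[float]:
    """Divide by the root mean square only; no mean-centering."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]

def post_ln_step(x, sublayer, norm=layer_norm):
    """Original Transformer ordering: norm(x + sublayer(x))."""
    return norm([a + b for a, b in zip(x, sublayer(x))])

def pre_ln_step(x, sublayer, norm=layer_norm):
    """GPT-2 style ordering: x + sublayer(norm(x)). The residual path is never normalized."""
    return [a + b for a, b in zip(x, sublayer(norm(x)))]

def toy_sublayer(x: list[float]) -> list[float]:
    """Hypothetical stand-in for attention or the feed-forward network."""
    return [2.0 * v for v in x]

x = [1.0, 2.0, 3.0, 4.0]
print(post_ln_step(x, toy_sublayer))
print(pre_ln_step(x, toy_sublayer))
print(pre_ln_step(x, toy_sublayer, norm=rms_norm))
```

In the Pre-LN form the input `x` passes straight through the addition, which gives gradients an unobstructed path through deep stacks; in the Post-LN form every layer's normalization sits on that path.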

Overcoming the Memory Wall: KV-Caching and TurboQuant

Inference speed is perhaps the most visible metric of LLM performance. Because LLMs generate text autoregressively, one token at a time, the model must attend to every previous token to generate the next one. Without optimization, the Key (K) and Value (V) projections for every previous token would need to be recomputed at every single step, so generating T tokens requires on the order of T² projections (O(T²)). The implementation of KV-Caching solves this by storing the K and V matrices in memory as they are computed. Each new token only needs to compute its own K and V values, reducing the projection work to linear (O(T)). The attention step for each new token still reads every cached entry, which is why the cache itself becomes the next constraint.
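A toy decoder makes the saving visible by counting projection calls. This is a deliberately simplified sketch: a single attention head, no query projection, and fixed arithmetic standing in for the learned K and V weight matrices. All class and function names are hypothetical.

```python
import math

class ProjectionCounter:
    """Toy key/value projection that counts how often it is invoked."""
    def __init__(self):
        self.calls = 0

    def project(self, token_vec):
        self.calls += 1
        key = [2.0 * v for v in token_vec]      # stand-in for a learned K projection
        value = [v + 1.0 for v in token_vec]    # stand-in for a learned V projection
        return key, value

def attend(query, keys, values):
    """Softmax attention of one query over all keys and values."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]

def decode_without_cache(tokens, proj):
    outputs = []
    for t in range(1, len(tokens) + 1):
        # Re-project every token in the prefix at every step.
        kv = [proj.project(tok) for tok in tokens[:t]]
        keys = [k for k, _ in kv]
        values = [v for _, v in kv]
        outputs.append(attend(tokens[t - 1], keys, values))
    return outputs

def decode_with_cache(tokens, proj):
    keys, values, outputs = [], [], []
    for tok in tokens:
        # Project only the newest token and append it to the cache.
        k, v = proj.project(tok)
        keys.append(k)
        values.append(v)
        outputs.append(attend(tok, keys, values))
    return outputs

tokens = [[0.1 * i, 0.2 * i - 0.5] for i in range(1, 9)]  # 8 toy token vectors
slow, fast = ProjectionCounter(), ProjectionCounter()
out_slow = decode_without_cache(tokens, slow)
out_fast = decode_with_cache(tokens, fast)
print(slow.calls, fast.calls)  # prints: 36 8
```

Both loops produce the same attention outputs; only the amount of repeated projection work differs (1 + 2 + ... + 8 = 36 calls versus 8).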

However, KV-Caching introduces a "Memory Wall." The cache stores one key and one value vector per token in every layer, so it consumes memory proportional to the number of layers, the sequence length, the batch size, and the model’s hidden dimension. For long-context applications, the KV-cache can easily exceed the available VRAM on a single GPU, even if the model weights themselves fit. This has led to a surge in research regarding cache compression. A 2026 breakthrough by Google Research, titled TurboQuant, introduced a method to compress the KV-cache to a mere 3 bits per value using online vector quantization and Lloyd-Max Quantization. This technique is reported to achieve a 5x to 6x reduction in memory consumption, in line with moving from 16-bit to roughly 3-bit storage, with virtually no loss in accuracy, effectively allowing massive contexts to be handled on consumer-grade hardware and unclogging the primary bottleneck in modern LLM serving.
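The size of the cache, and what a 3-bit representation would buy, can be estimated with back-of-the-envelope arithmetic. The sketch below does not implement TurboQuant or any quantizer; it only counts storage. The model shape (32 layers, hidden size 4096, 32,768-token context, standard multi-head attention) is a hypothetical example, and overhead such as codebooks or scale factors is ignored.

```python
def kv_cache_bytes(layers: int, hidden_dim: int, seq_len: int,
                   bits_per_value: int, batch: int = 1) -> float:
    """Keys and values (factor of 2) for every layer, position, and hidden unit."""
    values = 2 * layers * hidden_dim * seq_len * batch
    return values * bits_per_value / 8

GIB = 1024 ** 3

# Hypothetical model shape, chosen only for illustration.
fp16 = kv_cache_bytes(layers=32, hidden_dim=4096, seq_len=32768, bits_per_value=16)
q3 = kv_cache_bytes(layers=32, hidden_dim=4096, seq_len=32768, bits_per_value=3)

print(f"16-bit: {fp16 / GIB:.1f} GiB  3-bit: {q3 / GIB:.1f} GiB  ratio: {fp16 / q3:.2f}x")
# prints: 16-bit: 16.0 GiB  3-bit: 3.0 GiB  ratio: 5.33x
```

Architectures that share key and value heads across query heads store fewer values per token, so their caches would be smaller than this estimate at the same hidden size.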

The Precision Paradox in Quantization

To deploy LLMs at scale, developers use quantization: reducing the numerical precision of model weights from 32-bit or 16-bit floating point (FP32/FP16) to 8-bit or 4-bit integers (INT8/INT4). This drastically reduces the memory footprint and can accelerate inference. However, a critical discovery in LLM engineering is that quantization cannot be applied uniformly across all layers.

LayerNorm is almost always skipped during INT8 quantization and kept at full precision. The reasoning is twofold: first, LayerNorm layers contain a negligible number of parameters compared to the massive weight matrices in the attention and feed-forward layers, meaning the memory savings from quantizing them are minimal. Second, LayerNorm is highly sensitive to precision loss. Because it involves calculating means and variances, reducing the precision to 8 bits can introduce significant rounding errors that propagate through the rest of the network, spoiling the model’s output quality. This "precision paradox" teaches a vital lesson in AI infrastructure: not all parameters are created equal, and the most sensitive parts of the architecture must be protected to maintain the integrity of the whole.
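The selective approach can be sketched as a quantization pass that filters by parameter name. This is a minimal illustration using simple symmetric absmax INT8 quantization on Python lists; it is not the LLM.int8() algorithm or any library's API, and the parameter names and the `skip` substrings are assumptions modeled loosely on GPT-2-style naming.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric absmax quantization to integers in [-127, 127]."""
    peak = max(abs(w) for w in weights)
    scale = peak / 127 if peak > 0 else 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(q: list[int], scale: float) -> list[float]:
    """Map stored integers back to approximate floating-point weights."""
    return [v * scale for v in q]

def quantize_model(params: dict[str, list[float]], skip=("ln_", "norm")):
    """Quantize every tensor except those whose name marks a normalization layer."""
    out = {}
    for name, tensor in params.items():
        if any(tag in name for tag in skip):
            out[name] = tensor                  # kept at full precision
        else:
            out[name] = quantize_int8(tensor)   # stored as (int8 values, scale)
    return out

# Hypothetical flattened parameters for one Transformer block.
params = {
    "h.0.ln_1.weight": [1.02, 0.98, 1.01, 0.99],
    "h.0.attn.c_attn.weight": [0.31, -0.87, 0.05, 0.44, -0.12, 0.66],
    "h.0.mlp.c_fc.weight": [-0.25, 0.73, 0.19, -0.58],
}

model = quantize_model(params)
for name, stored in model.items():
    kind = "full precision" if isinstance(stored, list) else "int8"
    print(f"{name}: {kind}")
```

The normalization weights pass through unchanged, while each large matrix is stored as integers plus a single scale factor, so the round-trip error per weight is bounded by half of that scale.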

Chronology of Transformer Architectural Innovations

The development of these architectural features follows a clear timeline of increasing efficiency and stability:

- 2017: Publication of "Attention Is All You Need," introducing the Transformer, Sinusoidal Positional Embeddings, and Post-LayerNorm.

- 2019: GPT-2 popularizes the Pre-LayerNorm approach and demonstrates the effectiveness of Weight Tying for mid-sized models.

- 2021: Introduction of LoRA for efficient fine-tuning and RoPE for rotary positional dynamics, marking a shift toward more flexible architectures.

- 2022: The release of LLM.int8() establishes the standard for mixed-precision quantization in large-scale deployments.

- 2023: The identification of the LoRA rank-diminishing problem leads to the proposal of RsLoRA (Rank-Stabilized LoRA).

- 2025-2026: Emerging technologies like TurboQuant begin to address the KV-cache memory bottleneck, enabling "massive context" processing on single-chip systems.

Industry Implications and Future Outlook

The transition from "black box" API usage to deep architectural understanding marks the maturation of the AI field. For enterprises, these design choices represent the difference between a model that is too expensive to run and one that provides a sustainable competitive advantage. The move toward Rank-Stabilized LoRA and 3-bit KV-caching indicates that the future of LLMs lies not just in "scaling up" with more parameters, but in "scaling in"—optimizing the internal mechanics to do more with less.

Furthermore, the democratization of these architectural insights allows smaller players to build and fine-tune models that were previously the sole domain of tech giants. As engineers continue to confront the trade-offs between stability and performance, the industry is likely to see further specialization, where model architectures are custom-tailored for specific hardware constraints and task requirements. The "invisible architecture" of LLMs is becoming visible, and with it, the path to more efficient, accessible, and powerful artificial intelligence.