The landscape of artificial intelligence is currently witnessing a fundamental shift in how Large Language Models (LLMs) interact with massive datasets, moving away from the restrictive limitations of small context windows toward an era of expansive digital memory. For several years, the standard operating procedure for Retrieval-Augmented Generation (RAG) was dictated by necessity: developers were forced to fragment documents into small segments, convert them into vector embeddings, and retrieve only the snippets that scored as most relevant. This "chunking" approach was the only viable path when models like the original GPT-3 or early iterations of Llama were constrained by context windows of roughly 2,000 to 4,000 tokens, and even the largest early GPT-4 variant topped out at 32,000. However, the release of next-generation models has shattered these boundaries: Anthropic’s Claude 3 Opus accepts 200,000 tokens, and Google’s Gemini 1.5 Pro offers context windows that can accommodate one million to two million tokens. While this technological leap suggests that one could theoretically input an entire library of technical manuals into a single prompt, it has simultaneously introduced a new set of complexities regarding computational efficiency, cost management, and the cognitive "attention" of the models themselves.

The Evolution of Context: A Chronological Perspective

To understand the current state of RAG, one must look at the rapid progression of context window capacities over the last twenty-four months. In early 2023, a 32,000-token window was considered state-of-the-art, forcing developers to focus heavily on the "retrieval" aspect of RAG to ensure the model never saw irrelevant data. By late 2023, GPT-4 Turbo expanded this to 128,000 tokens, allowing for the inclusion of several long documents. The paradigm shift reached its zenith in 2024 with the introduction of Gemini 1.5 Pro, which launched with a one-million-token window and was later extended to two million tokens (roughly two hours of video or thousands of pages of text) in a single pass.

Despite these advancements, the "brute force" approach of stuffing a million tokens into a prompt is rarely the most effective strategy. Industry benchmarks and empirical studies have shown that as context length increases, the "signal-to-noise" ratio often degrades. This has led to the development of sophisticated long-context RAG architectures that combine the best of both worlds: the massive capacity of modern LLMs and the precision of traditional retrieval systems.

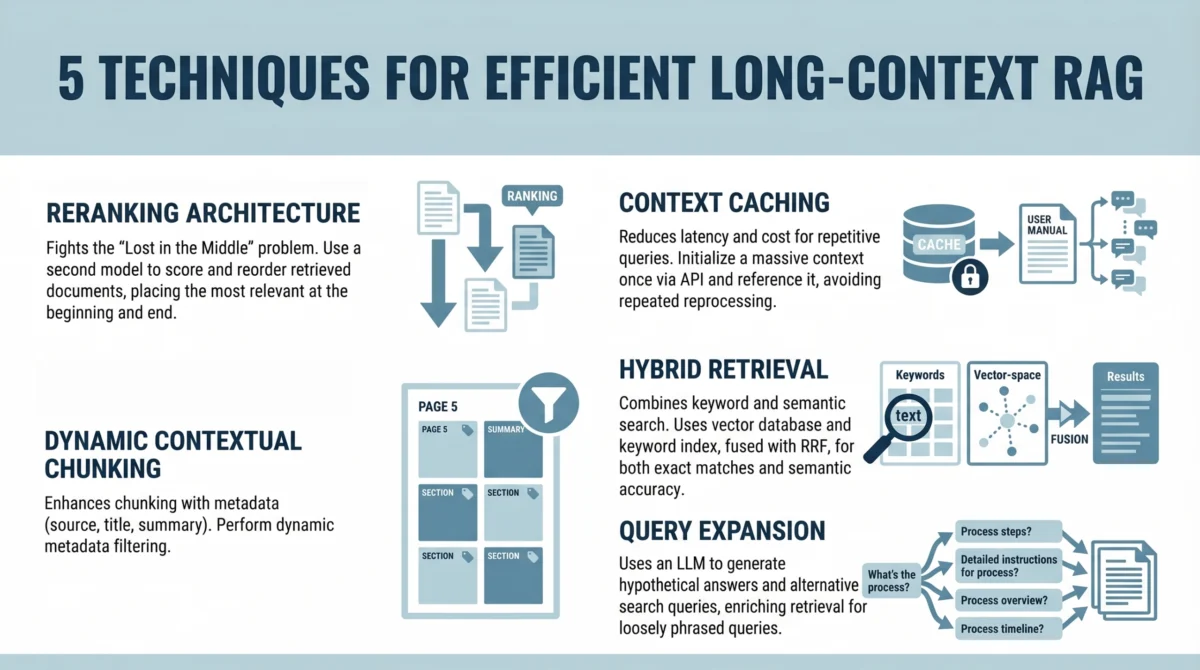

Addressing the Attention Deficit: The Reranking Solution

One of the most significant challenges identified in long-context processing is the "Lost in the Middle" phenomenon. A seminal 2023 study conducted by researchers at Stanford University, the University of California, Berkeley, and Samaya AI revealed a critical flaw in how LLMs utilize their context. The study found that models are most accurate when the relevant information sits at the very beginning or the very end of a prompt, and that their ability to retrieve and reason with information buried in the middle of a large context block drops significantly.

To combat this, modern RAG architectures have integrated a "reranking" tier. In this workflow, an initial retrieval system (often a fast bi-encoder) pulls the top 50 to 100 relevant document chunks from a database. Instead of passing these directly to the LLM, a more computationally intensive "cross-encoder" or reranker model scores each candidate against the query. The system keeps the highest-scoring chunks and reorders them so that the most critical information is placed at the "poles" of the prompt (the beginning and the end), with the weakest candidates in the middle. Placing data this way does not change the model’s attention mechanism, but it puts the most pertinent facts where the model is most likely to use them, which reduces the performance dip associated with long-form context.
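The rerank-then-reorder step can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `score_fn` is a hypothetical stand-in for a cross-encoder call, and the alternating placement is one simple way to push the best chunks toward the two ends of the prompt.

```python
from typing import Callable, List


def rerank(query: str, candidates: List[str],
           score_fn: Callable[[str, str], float], top_k: int = 10) -> List[str]:
    """Sort candidates best-first using score_fn and keep the top_k.

    score_fn is an assumption: in practice it would wrap a cross-encoder
    that scores a (query, chunk) pair.
    """
    ordered = sorted(candidates, key=lambda c: score_fn(query, c), reverse=True)
    return ordered[:top_k]


def reorder_for_poles(ranked_chunks: List[str]) -> List[str]:
    """Arrange best-first chunks so the strongest sit at the start and end.

    Chunks are dealt alternately to a front list and a back list; the back
    list is reversed so the second-best chunk lands in the last position
    and the weakest chunks end up in the middle.
    """
    front: List[str] = []
    back: List[str] = []
    for i, chunk in enumerate(ranked_chunks):
        (front if i % 2 == 0 else back).append(chunk)
    return front + back[::-1]
```

With five chunks ranked 1 to 5, the resulting prompt order is 1, 3, 5, 4, 2: the top two candidates occupy the first and last slots.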

Financial and Latency Implications: The Role of Context Caching

While the technical capacity to process a million tokens exists, the financial implications are significant for enterprise-scale applications. Processing a million tokens in a single query can cost several dollars, depending on the provider’s pricing model, and that cost is incurred again on every query that resends the same context. Furthermore, the "Time to First Token" (TTFT), the delay between a user asking a question and the model beginning its response, increases as the model has to "read" more data.

To mitigate these costs and delays, major AI providers have introduced "Context Caching." This technique allows developers to pre-process and store a large, static knowledge base (such as a company’s entire legal archive or a software project’s documentation) on the model provider’s servers. When a user asks a query, the model does not re-read the entire million-token corpus; instead, it refers to the "cached" state of that data.

Data from early adopters suggests that context caching can reduce costs by as much as 90% for repetitive queries and slash latency by over 80%. This makes long-context RAG viable for real-time applications like customer support chatbots, where a model must reference a massive but infrequently changing set of instructions or product details.
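To make the arithmetic concrete, the following sketch compares the input cost of one query with and without a cached context. The price and the discount are illustrative assumptions, not any provider's actual rates, and the sketch ignores output tokens as well as any fee a provider may charge for writing or storing the cache.

```python
def query_cost_usd(context_tokens: int, question_tokens: int,
                   price_per_million: float,
                   cached_discount: float = 0.0) -> float:
    """Estimate the input cost of a single query in US dollars.

    cached_discount is the fraction taken off the price of context tokens
    that are served from the cache (0.0 means no caching). The question
    itself is always billed at the full rate.
    """
    if not 0.0 <= cached_discount <= 1.0:
        raise ValueError("cached_discount must be between 0 and 1")
    context_rate = price_per_million * (1.0 - cached_discount)
    total = context_tokens * context_rate + question_tokens * price_per_million
    return total / 1_000_000


if __name__ == "__main__":
    PRICE = 3.00      # hypothetical USD per million input tokens
    DISCOUNT = 0.90   # hypothetical discount on cached context tokens

    uncached = query_cost_usd(1_000_000, 200, PRICE)
    cached = query_cost_usd(1_000_000, 200, PRICE, cached_discount=DISCOUNT)
    print(f"uncached: ${uncached:.4f}  cached: ${cached:.4f}")
    print(f"saving per query: {1 - cached / uncached:.1%}")
```

Under these assumed numbers the context dominates the bill, so the per-query saving tracks the cache discount almost exactly; the benefit shrinks as the uncached part of the prompt grows.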

Precision Through Dynamic Contextual Chunking and Metadata

Even with a million-token window, "noise" remains the enemy of accuracy. If a model is fed 500,000 tokens of irrelevant data alongside 500 tokens of the correct answer, the risk of "hallucination"—where the model generates plausible but false information—increases. The third pillar of efficient long-context RAG is dynamic contextual chunking.

Unlike traditional chunking, which breaks text at arbitrary character counts, dynamic chunking uses the LLM itself to identify logical semantic breaks. Furthermore, each chunk is enriched with metadata—tags that identify the document’s author, creation date, department, or specific keywords. When a query is made, the system uses these metadata filters to prune the search space before the LLM ever sees the data. This hybrid approach ensures that the context provided to the model is not just long, but highly curated, combining the breadth of a large window with the surgical precision of filtered retrieval.
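The metadata pruning step might look like the sketch below. The `Chunk` fields mirror the tags named above (author, creation date, department, keywords); the class and the `prefilter` function are hypothetical names chosen for illustration, and a production system would normally push these filters down into the vector database rather than loop in Python.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, Iterable, List, Optional


@dataclass(frozen=True)
class Chunk:
    text: str
    author: str
    created: date
    department: str
    keywords: FrozenSet[str] = frozenset()


def prefilter(chunks: Iterable[Chunk],
              department: Optional[str] = None,
              created_after: Optional[date] = None,
              required_keywords: Iterable[str] = ()) -> List[Chunk]:
    """Drop chunks whose metadata cannot match the query.

    Runs before any embedding search or LLM call, so only the surviving
    chunks are scored and placed in the prompt.
    """
    required = set(required_keywords)
    selected = []
    for chunk in chunks:
        if department is not None and chunk.department != department:
            continue
        if created_after is not None and chunk.created <= created_after:
            continue
        if not required <= chunk.keywords:
            continue
        selected.append(chunk)
    return selected
```

For example, `prefilter(chunks, department="legal", created_after=date(2023, 1, 1))` keeps only recent legal documents, whatever their semantic similarity to the query.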

Hybrid Retrieval: Merging Semantic and Lexical Search

A common pitfall in modern AI development is the over-reliance on "semantic search" (searching by meaning) at the expense of "lexical search" (searching by exact keywords). While vector-based semantic search is excellent for understanding intent—knowing that a user asking about "fire safety" might want to see documents about "smoke detectors"—it often fails on technical specifics, such as part numbers, legal citations, or rare medical terminology.

The most robust long-context systems now employ "Hybrid Retrieval." This method runs two simultaneous searches: a vector search for conceptual relevance and a traditional BM25 keyword search for exact matches. The results are then fused using techniques like Reciprocal Rank Fusion (RRF). This ensures that if a technician asks for "Protocol 88-B," the system finds that exact string rather than just providing general documents about "protocols." For long-context models, this hybrid approach provides a diverse set of high-quality inputs that cover both the "gist" and the "specifics" of the user’s request.
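Reciprocal Rank Fusion itself is compact enough to show in full. In this sketch each input is a list of document IDs ordered best-first (one from the vector search, one from BM25), and every document receives 1 / (k + rank) from each list it appears in. The constant k = 60 is the commonly used default; the document IDs in the usage note are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]],
                           k: int = 60) -> List[str]:
    """Fuse several best-first rankings into one list of document IDs.

    A document's fused score is the sum of 1 / (k + rank) over every
    ranking that contains it, with ranks starting at 1. Ties are broken
    alphabetically so the output is deterministic.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))
```

Given a vector ranking `["safety-overview", "protocol-88b", "fire-drills"]` and a BM25 ranking `["protocol-88b", "parts-list", "safety-overview"]`, the fused order starts with `protocol-88b`, because it ranks highly in both lists, followed by `safety-overview`.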

Bridging the Language Gap with Query Expansion

Often, the way a user phrases a question does not match the way the answer is written in the documentation. A user might ask, "How do I fix a blank screen?" while the technical manual describes "troubleshooting unresponsive display output." Query expansion, sometimes called query rewriting or multi-query retrieval, uses a lightweight, inexpensive LLM to generate multiple versions of the user’s query before the retrieval process begins. Each variant is run through the retriever, and the results are merged.

One popular method is Hypothetical Document Embeddings (HyDE), where the model generates a "fake" answer to the user’s question first. The system then uses that fake answer to search the actual database. This technique is particularly effective in long-context RAG because it helps the retriever find the most relevant "needles" in the million-token "haystack" by matching the professional terminology likely found in the source documents.
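The HyDE flow can be sketched end to end with toy components. Everything here is a simplification under stated assumptions: `generate_answer` is a hypothetical callable standing in for the lightweight LLM, and `toy_embed` is a bag-of-words counter used only so the example runs without an embedding model. A real system would call an actual embedding model and a vector index.

```python
import math
import re
from collections import Counter
from typing import Callable, Dict, List


def toy_embed(text: str) -> Counter:
    """Stand-in embedding: lowercase word counts (not a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def hyde_search(question: str, corpus: Dict[str, str],
                generate_answer: Callable[[str], str],
                top_k: int = 1) -> List[str]:
    """Retrieve by embedding a hypothetical answer instead of the question."""
    hypothetical = generate_answer(question)   # the "fake" answer
    query_vec = toy_embed(hypothetical)        # embed the answer, not the question
    ranked = sorted(corpus,
                    key=lambda doc_id: cosine(query_vec, toy_embed(corpus[doc_id])),
                    reverse=True)
    return ranked[:top_k]
```

The design point is that the hypothetical answer is written in the vocabulary of the documentation, so it lands closer to the real passage than the user's original wording does, even if the answer's content is wrong.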

Analysis of Broader Implications

The shift toward long-context RAG represents more than just a technical upgrade; it is a fundamental change in the economics of information. For enterprises, the ability to feed an LLM an entire codebase or a decade’s worth of financial reports without losing the "thread" of the conversation opens new frontiers in automated auditing, legal discovery, and complex research.

However, industry analysts warn that these capabilities must be balanced with data privacy and security. As context windows grow, the amount of sensitive data sent to cloud-based LLM providers with each request grows with them. This has sparked a secondary trend: the rise of "on-premise" or "private cloud" long-context models, where companies like Mistral or Meta (with Llama 3) provide weights that can be hosted locally, ensuring that large "knowledge dumps" remain within the corporate firewall.

Furthermore, the "Needle in a Haystack" (NIAH) tests, the current industry standard for measuring context retrieval, are being criticized for being too simple. While a model might be 99% accurate at finding a single fact in a million tokens, its ability to perform "multi-hop reasoning" (connecting a fact near the start of the context with a fact some 900,000 tokens later) is still an area of active research and development.
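A single-needle test of the kind described here is simple to construct, which is part of the criticism. The sketch below plants one fact at a chosen depth in filler text and checks whether the model's reply contains the expected string. `ask_model` is a hypothetical callable wrapping whatever LLM is under test, and substring matching is a deliberately crude scoring rule.

```python
from typing import Callable, Dict, List, Sequence


def build_haystack(filler: List[str], needle: str, depth: float) -> str:
    """Insert needle among filler sentences at a fractional depth.

    depth = 0.0 places the needle first, 1.0 places it last.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    position = int(depth * len(filler))
    return " ".join(filler[:position] + [needle] + filler[position:])


def run_niah(ask_model: Callable[[str], str], filler: List[str],
             needle: str, question: str, expected: str,
             depths: Sequence[float]) -> Dict[float, bool]:
    """Return, for each depth, whether the model's reply contains expected."""
    results: Dict[float, bool] = {}
    for depth in depths:
        prompt = build_haystack(filler, needle, depth) + "\n\nQuestion: " + question
        results[depth] = expected.lower() in ask_model(prompt).lower()
    return results
```

A multi-hop variant would plant two or more dependent facts at different depths and ask a question that requires combining them, which this single-fact harness does not measure.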

Conclusion

The emergence of million-token context windows has not rendered RAG obsolete; rather, it has matured the field. The goal of a developer is no longer to fit as much data as possible into a tiny box, but to manage a massive stream of information in a way that is cost-effective, fast, and intellectually coherent for the model. By implementing reranking to fight attention loss, leveraging caching for cost efficiency, and using hybrid search for precision, organizations can build AI systems that don’t just "read" more, but "understand" better. As these techniques continue to evolve, the barrier between human knowledge and machine utility will continue to thin, paving the way for AI agents that possess a truly comprehensive and actionable memory.