The landscape of data science is undergoing a fundamental shift as Large Language Models (LLMs) transition from simple code generators to integrated workflow orchestrators. This evolution is being driven by the emergence of standardized frameworks like the Model Context Protocol (MCP) and the implementation of modular "Skills"—reusable packages of instructions designed to handle recurring complex tasks with high reliability. As organizations seek to maximize the productivity of their data teams, these technical advancements are proving critical in reducing the time spent on repetitive manual processes, such as data cleaning, visualization, and reporting.

The Architecture of AI Skills in Modern Development

In the context of modern AI-assisted development environments like Claude Code or Codex, a "Skill" represents a significant departure from traditional prompting. While a standard prompt is often a one-off instruction, a Skill is a structured, reusable package. At its core, a Skill consists of a SKILL.md file that opens with a short block of metadata (YAML frontmatter), specifically a name and a description of what the Skill does and when to use it. Detailed instructions for the AI follow the metadata.

The technical advantage of this approach lies in context window management. One of the primary constraints of current LLMs is the "context window," or the amount of information the model can process at one time. Skills help keep the main context window lean. The AI initially loads only the lightweight metadata, and it reads the full instructions and bundled resources (such as scripts, templates, and examples) only when it judges the Skill relevant to the current task. This "just-in-time" loading helps the model stay focused instead of being crowded out by instructions it does not need.
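The loading pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not how Claude Code or Codex actually implement it. It assumes that the frontmatter consists of simple `key: value` lines between two `---` markers, reads only that block up front, and defers reading the instructions until `body()` is first called. The names `Skill`, `read_metadata`, and `select_skills` are hypothetical, and the keyword-overlap check only stands in for the model's own judgment of relevance.

```python
import re
from dataclasses import dataclass, field
from pathlib import Path
from typing import List, Optional


@dataclass
class Skill:
    """Metadata is held in memory; the full instructions are read on demand."""
    path: Path
    name: str
    description: str
    _body: Optional[str] = field(default=None, repr=False)

    def body(self) -> str:
        # Just-in-time loading: read the instructions only the first time they are needed.
        if self._body is None:
            text = self.path.read_text(encoding="utf-8")
            # Splitting on the first two '---' markers yields ['', frontmatter, instructions].
            self._body = text.split("---", 2)[2].strip()
        return self._body


def read_metadata(path: Path) -> Skill:
    """Read only the frontmatter lines between the opening and closing '---'."""
    meta = {}
    with path.open(encoding="utf-8") as f:
        if f.readline().strip() != "---":
            raise ValueError(f"{path} does not start with frontmatter")
        for line in f:
            if line.strip() == "---":
                break  # stop before the (possibly long) instruction body
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return Skill(path, meta["name"], meta["description"])


def select_skills(skills: List[Skill], task: str) -> List[Skill]:
    """Stand-in relevance test: some description word of 4+ letters appears in the task."""
    task_words = set(re.findall(r"[a-z]+", task.lower()))
    return [
        s for s in skills
        if any(len(w) >= 4 and w in task_words
               for w in re.findall(r"[a-z]+", s.description.lower()))
    ]
```

Because `read_metadata` stops at the closing `---`, registering many Skills costs only a few lines per file. The larger instruction body is read only for Skills that `select_skills` flags as relevant.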

Case Study: Automating a Multi-Year Visualization Project

To understand the practical implications of this technology, one can examine the automation of long-term data projects. Since 2018, industry practitioners have documented a trend toward "slow data," where individuals or teams produce consistent weekly visualizations to hone data intuition. For many, this process historically required approximately 60 minutes of manual labor per week, involving data querying, cleaning, analysis, and visual design.

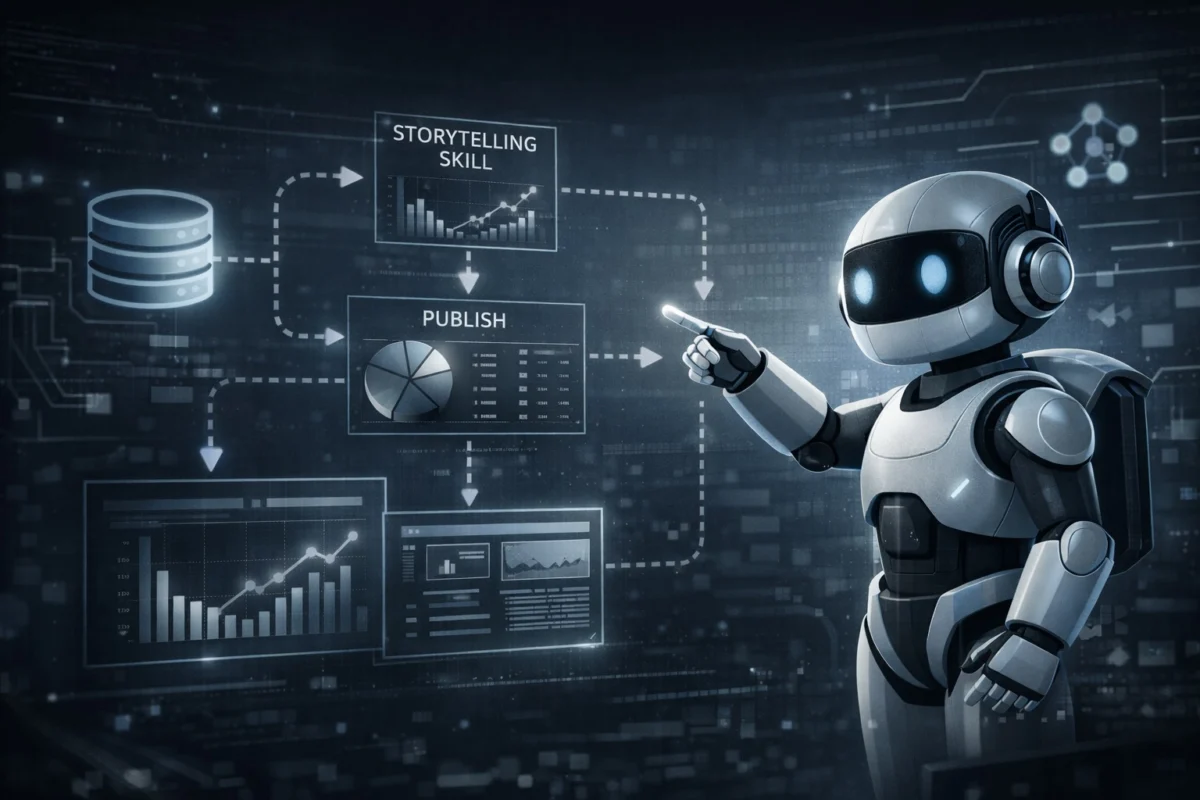

By implementing a Skill-based workflow, this hour-long routine has been compressed into less than 10 minutes, which reduces the weekly time spent by more than 83%. The automation of this workflow typically involves two primary Skills:
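Savings figures like this are easy to misstate, so the arithmetic is shown below using the source's 60-minute and 10-minute figures. A time reduction and a speedup are different quantities. Going from 60 to 10 minutes removes about 83% of the time, which is the same as running 6 times faster. The helper `time_savings` is hypothetical.

```python
def time_savings(before_min: float, after_min: float) -> dict:
    """Compare a workflow's duration before and after automation."""
    if before_min <= 0 or after_min <= 0:
        raise ValueError("durations must be positive")
    return {
        # Share of the original time that is no longer spent.
        "time_reduction_pct": 100 * (before_min - after_min) / before_min,
        # How many times faster the new workflow runs.
        "speedup": before_min / after_min,
    }


result = time_savings(60, 10)
print(f"{result['time_reduction_pct']:.1f}% less time, {result['speedup']:.0f}x faster")
# prints: 83.3% less time, 6x faster
```

Because the automated run takes *less* than 10 minutes, 83% is a lower bound on the reduction.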

- The Storytelling-Viz Skill: This focuses on identifying insights within a dataset and recommending the most effective chart types based on the data’s narrative requirements.

- The Publishing Skill: This automates the formatting and deployment of the final visualization to various platforms or internal reports.

In a recent demonstration of this technology, a developer utilized an Apple Health dataset stored in a Google BigQuery database. By invoking a visualization skill through a desktop AI interface, the system was able to query the database, surface a specific insight regarding the correlation between annual exercise time and caloric expenditure, and generate a clean, interactive visualization with an insight-driven headline—all with minimal human intervention.

The Chronology of Skill Development

The transition from a manual workflow to an automated, Skill-driven process follows a distinct four-stage evolution:

Phase 1: Manual Foundation (2018–2023)

During this period, data scientists relied on traditional Business Intelligence (BI) tools such as Tableau or Python libraries like Matplotlib and Seaborn. The process was entirely manual, requiring the practitioner to perform every step from SQL querying to aesthetic polishing.

Phase 2: The Introduction of MCP (Late 2024)

The Model Context Protocol (MCP) was released by Anthropic in November 2024 as an open standard that lets LLMs connect to external data sources and tools through a common interface. This allowed AI models to read directly from sources such as BigQuery databases or Slack workspaces, removing the "copy-paste" barrier that previously hindered automation.
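Under the hood, MCP messages are JSON-RPC 2.0, and a client asks a server to run one of its tools by sending a `tools/call` request. The sketch below uses only the standard library to build such a request and extract the text from a response. The tool name `execute_query` and its `sql` argument are hypothetical, since each BigQuery MCP server defines its own tools. The `initialize` handshake and the transport (stdio or HTTP) are left out, and in practice the official MCP SDKs handle all of this.

```python
import json
from itertools import count
from typing import Any, Dict

_request_ids = count(1)  # request ids should not be reused within a session


def tools_call_request(name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def tool_result_text(response: str) -> str:
    """Join the text items of a tool result, raising on protocol or tool errors."""
    msg = json.loads(response)
    if "error" in msg:  # JSON-RPC level failure, e.g. an unknown method
        raise RuntimeError(msg["error"].get("message", "JSON-RPC error"))
    result = msg["result"]
    text = "\n".join(item["text"] for item in result.get("content", [])
                     if item.get("type") == "text")
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(text)
    return text
```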

Phase 3: Skill Bootstrapping (Late 2025)

Developers began using AI to build the very Skills intended to automate their work. By describing a workflow to a model, the AI could "bootstrap" the initial version of a SKILL.md file, effectively creating a specialized agent for a specific task.
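A bootstrapped first draft is essentially a frontmatter block plus a numbered workflow. The sketch below renders one from a plain-language description. In practice the model writes the draft, and this only shows the shape of the output. The function name is hypothetical, and the lowercase-and-hyphens naming rule is an assumed convention. A description containing YAML special characters (such as `: `) would need quoting.

```python
import re
from typing import List


def scaffold_skill(name: str, description: str, steps: List[str]) -> str:
    """Render a first-draft SKILL.md: frontmatter followed by a numbered workflow."""
    # Assumed naming convention: lowercase letters, digits, and single hyphens.
    if not re.fullmatch(r"[a-z0-9]+(-[a-z0-9]+)*", name):
        raise ValueError(f"invalid skill name: {name!r}")
    if "\n" in description:
        raise ValueError("description must fit on one line")
    workflow = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        "---\n"
        f"name: {name}\n"
        f"description: {description}\n"
        "---\n\n"
        f"# {name}\n\n"
        "## Workflow\n\n"
        f"{workflow}\n"
    )
```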

Phase 4: Iterative Optimization (Present)

The current phase involves refining these Skills through "Human-in-the-Loop" feedback. This includes integrating personal style preferences, researching external visualization best practices, and testing the Skill against diverse datasets to ensure robustness.

Technical Implementation and Optimization Strategies

Building a high-performing AI Skill is an iterative engineering task. In developers' accounts, the first bootstrapped version of a Skill may deliver only about 10% of the desired quality. Reaching professional-grade output requires three enhancement strategies:

Integration of Domain Knowledge

Generic LLMs often produce "stock" visualizations that lack professional polish. To bridge this gap, developers provide the Skill with specific style guides and examples of past successful work. By summarizing these principles into the Skill’s instructions, the AI can replicate a specific "brand voice" or visual identity consistently.

External Resource Synthesis

Robust Skills are not built in isolation. Developers often instruct the AI to research external visualization strategies from established design sources. This incorporates diverse perspectives on data density, color theory, and accessibility that a single developer might overlook.

Empirical Testing and Edge Case Management

Testing a Skill against 15 or more varied datasets is a reasonable benchmark for reliability. This process helps identify common failure points, such as:

- Inconsistent Formatting: Ensuring the AI always uses specific fonts or color palettes.

- Missing Context: Forcing the AI to include data sources and caveats in every output.

- Visual Clutter: Implementing rules to prevent overlapping labels or excessive grid lines.
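The three failure points above can be turned into automated checks that run on every output during testing. A batch of 15 or more datasets then yields a tally of which rules break most often. Everything in the sketch below is hypothetical. The `ChartSpec` format, the house font and palette in `STYLE`, and the gridline limit are placeholders for a real style guide. Label positions are assumed to be available as bounding boxes from the rendering step.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical house style: swap in the fonts, palette, and limits from a real guide.
STYLE = {"font": "Inter", "palette": {"#1f77b4", "#ff7f0e", "#7f7f7f"}, "max_gridlines": 5}


@dataclass
class Label:
    text: str
    x: float       # left edge, in chart pixels
    y: float       # bottom edge, in chart pixels
    width: float
    height: float

    def overlaps(self, other: "Label") -> bool:
        # Two axis-aligned boxes intersect unless they are separated horizontally or vertically.
        return not (self.x + self.width <= other.x or other.x + other.width <= self.x
                    or self.y + self.height <= other.y or other.y + other.height <= self.y)


@dataclass
class ChartSpec:
    font: str
    colors: List[str]
    source: str = ""
    caveats: List[str] = field(default_factory=list)
    labels: List[Label] = field(default_factory=list)
    gridlines: int = 0


def lint(chart: ChartSpec, style: Dict = STYLE) -> List[Tuple[str, str]]:
    """Return (category, detail) pairs for every rule the chart breaks."""
    problems = []
    # Inconsistent formatting
    if chart.font != style["font"]:
        problems.append(("formatting", f"font {chart.font!r} is not {style['font']!r}"))
    off_palette = [c for c in chart.colors if c not in style["palette"]]
    if off_palette:
        problems.append(("formatting", f"colors outside palette: {off_palette}"))
    # Missing context
    if not chart.source:
        problems.append(("context", "missing data source"))
    if not chart.caveats:
        problems.append(("context", "missing caveats"))
    # Visual clutter
    for i, a in enumerate(chart.labels):
        for b in chart.labels[i + 1:]:
            if a.overlaps(b):
                problems.append(("clutter", f"labels overlap: {a.text!r} and {b.text!r}"))
    if chart.gridlines > style["max_gridlines"]:
        problems.append(("clutter", f"{chart.gridlines} gridlines exceeds {style['max_gridlines']}"))
    return problems


def common_failures(charts: List[ChartSpec]) -> Dict[str, int]:
    """Tally failure categories across a batch of test outputs, e.g. one chart per dataset."""
    return dict(Counter(cat for chart in charts for cat, _ in lint(chart)))
```

In a Skill, the same rules would typically also be written into the SKILL.md instructions, so the model tries to satisfy them up front and the checks catch what slips through.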

Supporting Data: The Impact of Automation on Data Science

The shift toward AI Skills addresses a long-standing bottleneck in the data science industry. According to the "State of Data Science" report by Anaconda, data scientists traditionally spend about 37% of their time on data preparation and 16% on data visualization. These tasks are often cited as the most repetitive and least rewarding aspects of the job.

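By modularizing these tasks into Skills, organizations could in theory reclaim more than half of a data scientist’s work week. If preparation (37%) and visualization (16%) were fully automated, roughly 53% of working time would be freed. MCP can also help these automations scale, because the protocol gives each data source and tool the same standardized interface across different cloud environments. Security, however, still depends on how permissions and data access are configured for each connection.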

Broader Implications for the Future of Data Science

The rise of AI Skills and MCP suggests a fundamental change in the role of the data scientist. The profession is moving away from "manual execution"—writing the same cleaning scripts or visualization code repeatedly—toward "workflow architecture." In this new paradigm, the data scientist’s primary value lies in their ability to design, test, and refine the Skills that perform the execution.

Industry analysts suggest that this modular approach will lead to the creation of "Skill Marketplaces." Platforms like skills.sh are already emerging, allowing developers to share and download optimized instruction packages for everything from financial modeling to genomic data analysis. This democratization of expertise could significantly lower the barrier to entry for complex data tasks.

However, the human element remains irreplaceable in the "Discovery" phase of data science. While AI can automate 80% of the process—the querying, the formatting, and the initial analysis—the final 20%, which involves data intuition, storytelling, and the ethical interpretation of results, still requires human oversight. As one practitioner noted, the goal of automation is not to remove the human from the process, but to remove the "drudgery," allowing the professional to focus on seeing the world through the lens of data rather than fighting with the tools used to view it.

Conclusion

The integration of MCP and AI Skills represents a significant shift in how technical professionals work with language models. By treating instructions as reusable, modular code, data scientists can build robust systems that handle complex, recurring tasks far faster than manual workflows. As these protocols become more standardized, the ability to build and maintain a "library of skills" will likely become a core competency for any data professional operating in the AI era. This transition promises a future where data-driven insights are generated more rapidly, more consistently, and with greater professional rigor.